
Supervised fine-tuning (SFT) does not add new facts. It surfaces facts the model already has.

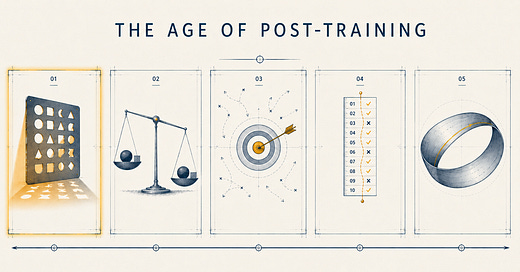

Pretraining gives the model knowledge of the world through billions of tokens of text. The capability for accurate, helpful, structured responses is already latent in the pretrained weights. SFT just shapes the model toward formats and registers humans expect.
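One way to see why SFT mostly shapes format: it typically uses the same next-token loss as pretraining, applied to prompt/response pairs wrapped in a template. The sketch below is a toy illustration under stated assumptions, not any particular library's implementation. The `<user>`, `<assistant>`, and `<end>` markers, the whitespace tokenizer, and the helper names `build_example` and `masked_nll` are all hypothetical, and scoring only the response tokens is a common convention rather than a universal rule.

```python
import math

def build_example(prompt, response):
    """Wrap a prompt/response pair in a hypothetical chat template."""
    # Whitespace splitting stands in for a real tokenizer (assumption).
    prompt_tokens = ["<user>"] + prompt.split() + ["<assistant>"]
    response_tokens = response.split() + ["<end>"]
    tokens = prompt_tokens + response_tokens
    # 0 = excluded from the loss (prompt), 1 = trained on (response).
    loss_mask = [0] * len(prompt_tokens) + [1] * len(response_tokens)
    return tokens, loss_mask

def masked_nll(token_probs, loss_mask):
    """Mean negative log-likelihood over the masked-in tokens only.

    token_probs[i] is the probability a model assigns to the correct
    token at position i; here the caller supplies these values.
    """
    picked = [-math.log(p) for p, m in zip(token_probs, loss_mask) if m]
    return sum(picked) / len(picked)

tokens, mask = build_example("What is the capital of France ?", "Paris .")
```

Nothing in this setup supplies the fact that the answer is "Paris". The loss only rewards the model for producing it in the expected place and format, which is consistent with the claim that the knowledge has to be there already.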

The proof is in what doesn't work. Run SFT on a randomly initialized network and you get nothing useful. Run it on a model that has only seen one domain and you get a narrow responder.

What SFT teaches is not knowledge. It is the deployment of knowledge that is already there.

This is why the imitation paradigm works at all. Most of the capability was already built before SFT began.

May 9 at 12:12 AM
