How AI Is Being Used to Combat Payment Fraud

Advancements in AI capabilities can be used both to harm and to protect.

Malicious actors are increasingly using accessible, affordable AI-powered tools to automate fraud attempts and reach more victims. Generative AI can improve the quality of written scams and even create deepfake videos or voice clones, making social engineering attacks far more convincing.

These techniques exploit human behavior and emotions, manipulating people into sharing sensitive information or unintentionally compromising security.

As banks have strengthened their cyber defenses in recent years, fraudsters have shifted their focus toward customers. The rise of real-time payments has further narrowed the window to detect and block fraudulent transactions, increasing the pressure on prevention systems.

____

But companies are fighting back using AI.

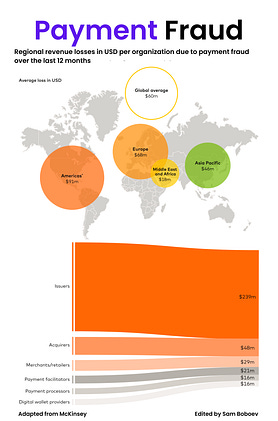

AI-powered fraud detection tools are already preventing millions of dollars from falling into the hands of criminals. According to recent surveys, the following shares of respondents say they used AI to save more than $5 million from fraud attempts over the past two years:

- 42% of issuers

- 26% of acquirers

The growing availability of accessible and affordable generative AI and LLM-based tools has driven widespread adoption across industries. As of early 2025, 78% of organizations were using AI in at least one business function, up sharply from 55% just two years earlier.

While adoption is still in its early stages, these technologies are already transforming how the payments industry detects, prevents, and responds to fraud.

#fintech #payment #fraud