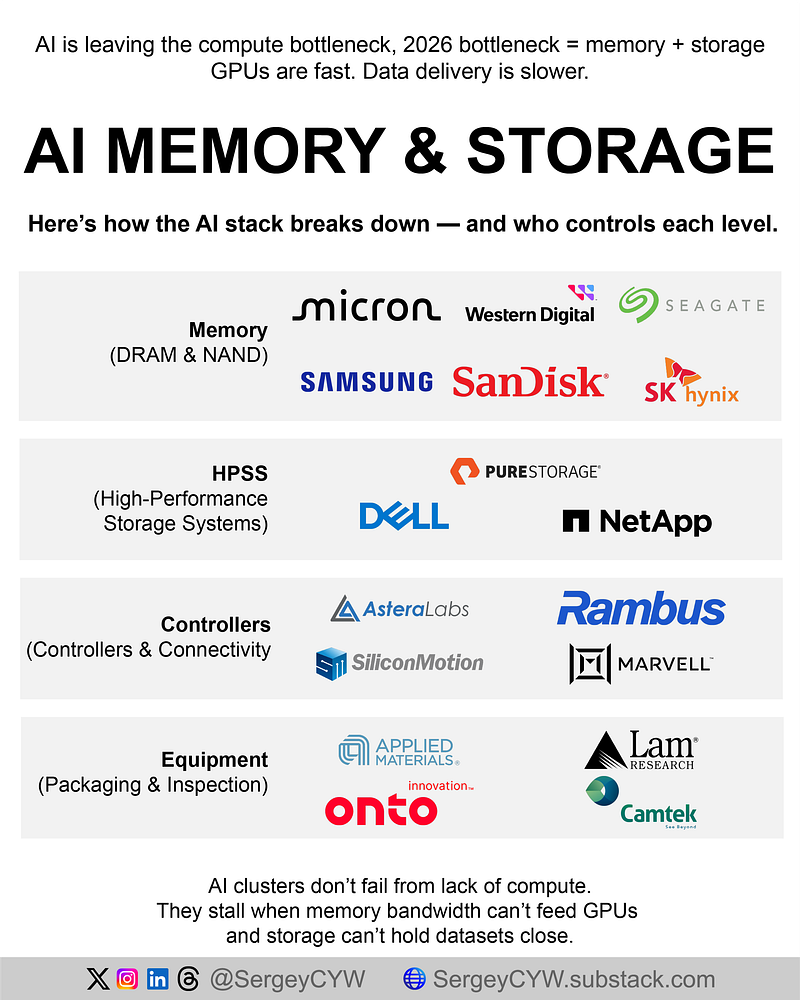

AI just hit the Memory Wall.

GPUs aren’t the bottleneck anymore. Data movement, density, and packaging decide performance. 2026 winners sit in HBM, storage, connectivity, and advanced packaging, not generic compute. 👇

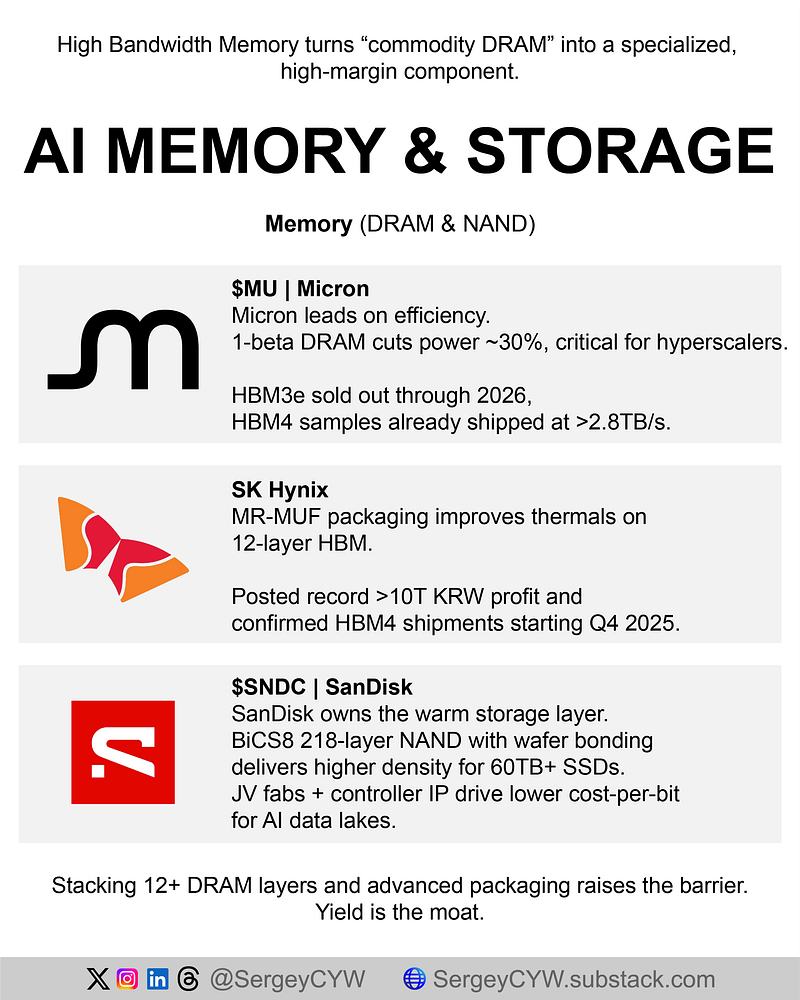

$MU — Micron: HBM turns memory into a bespoke product. Micron’s edge sits in power efficiency and yield, not capacity. Management confirmed HBM3e supply is fully allocated through 2026, locking in revenue visibility. HBM4 samples have already shipped with >2.8TB/s bandwidth, signaling execution readiness as stacks move beyond 12 layers, where weak yields fail fast.

SK Hynix: The closest thing to an Nvidia proxy in memory. Proprietary MR-MUF packaging solves heat at extreme stack heights, preserving yield leadership in premium HBM3e. The company posted a record operating profit above ₩10T, driven by AI server memory mix. HBM4 shipments are targeted for Q4 2025, defending lead time in the ultra-premium tier.

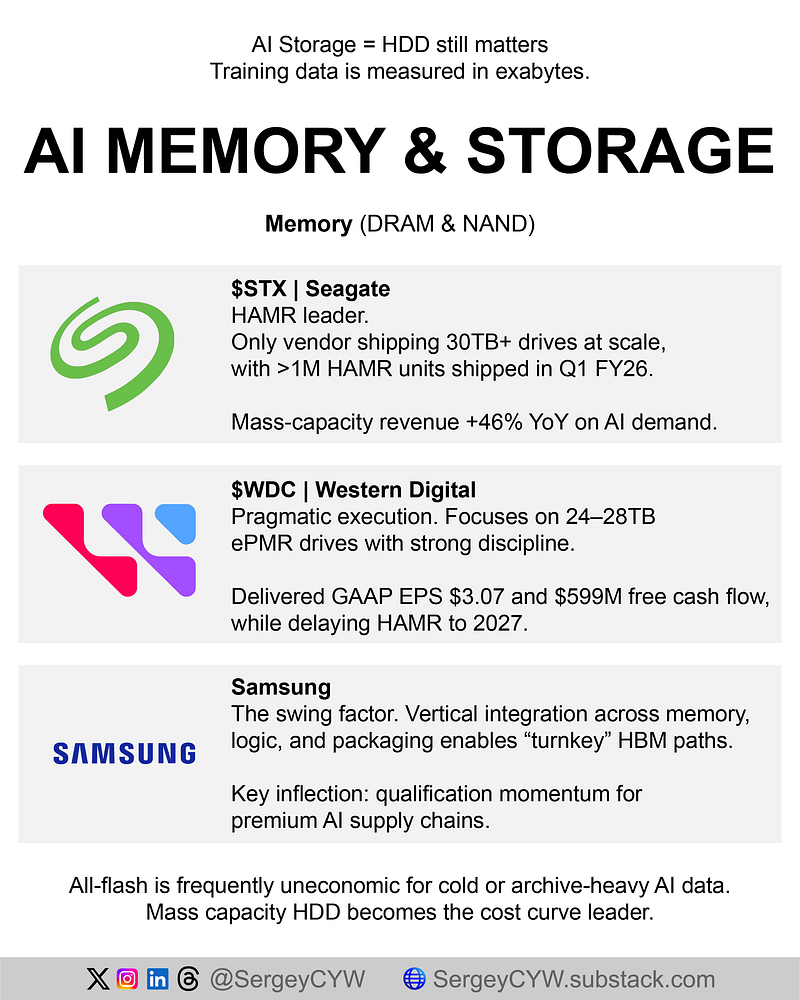

Samsung Electronics: A real turnaround hinge. Samsung is the only IDM combining memory, logic, and packaging, which is critical for HBM4, where the base die becomes custom logic. After months of delays, Samsung passed Nvidia HBM3e qualification, reopening access to the largest AI supply chain and breaking SK Hynix exclusivity. Execution, not demand, is the variable now.

$SNDK — SanDisk: Owns the AI warm storage layer. BiCS8 (218-layer) NAND uses wafer bonding to boost density without chasing extreme layer counts. It is optimized for the 30TB–60TB enterprise SSDs used in vector databases and RAG workloads. Active qualifications with five hyperscalers for PCIe Gen5 drives signal deployment beyond pilots into inference clusters.

$STX — Seagate: Flash is uneconomic for exabyte archives. Seagate solved scale with HAMR. It shipped 1M+ Mozaic HAMR drives in a single quarter, proving manufacturability where peers lag. 30TB drives are shipping today, and the path to 50TB unlocks materially lower hyperscaler TCO. AI factories need cold data stored cheaply, not instantly.

$WDC — Western Digital: The pragmatic counterweight. Uses ePMR for reliable 24–28TB drives while avoiding near-term HAMR risk. Strong cash generation and internal AI yield optimization support margins in a commodity market. The risk is clear: HAMR is delayed until 2027, conceding >30TB leadership to Seagate for the next cycle.

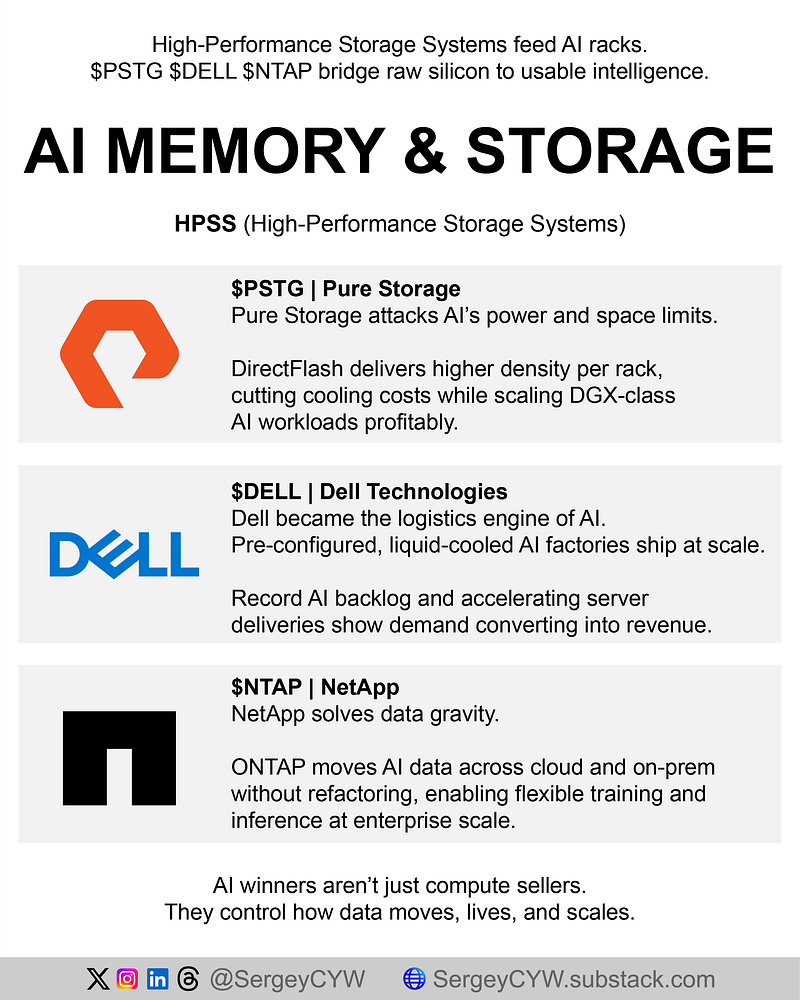

$PSTG — Pure Storage: AI data centers choke on power and space. Pure attacks both with DirectFlash modules (DFMs), bypassing commodity SSDs. DFMs reach 150–300TB per module, collapsing rack footprint and cooling needs. Hyperscaler shipments exceeded full-year targets early, and FlashBlade certification for DGX SuperPODs validates high-end AI training relevance.

$DELL — Dell Technologies: AI adoption is bottlenecked by deployment speed. Dell’s moat is logistics. “AI Factory” delivers pre-configured, liquid-cooled racks as a single SKU. AI server backlog hit $18.4B, with shipments accelerating. Enterprises buy certainty and speed, not whiteboard architectures.

$NTAP — NetApp: Data gravity favors mobility. ONTAP acts as a data operating layer across cloud and on-prem, enabling train-in-cloud, infer-anywhere workflows. It is embedded in all three major cloud consoles. New AFF systems tuned for AI workloads, plus DGX SuperPOD certification, expand relevance beyond legacy enterprise storage.

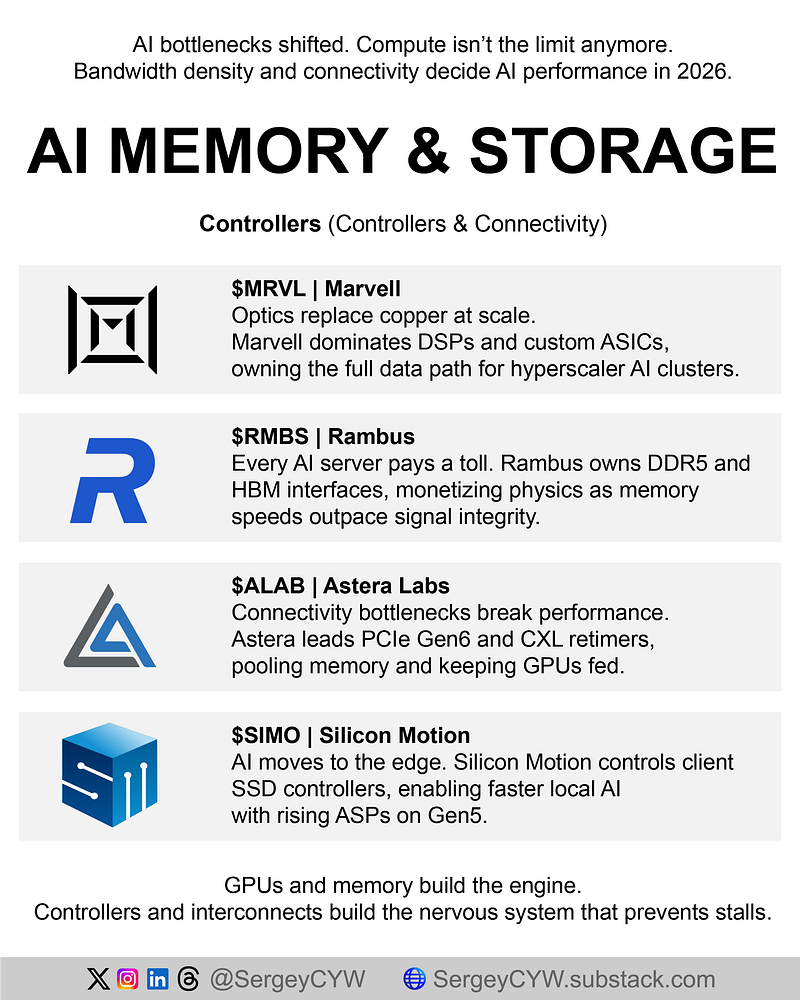

$MRVL — Marvell: Copper fails at scale. Optics win. Marvell owns DSPs, Ethernet, and custom ASICs, controlling the entire data path. Data center revenue now dominates the mix. The acquisition of photonics IP positions Marvell for optical compute interconnect, replacing copper inside racks for major power savings as clusters pass 100k GPUs.

$RMBS — Rambus: A royalty on speed. Owns interface IP and controller chips required for DDR5 and HBM4. As speeds double, signal integrity becomes non-optional. Product revenue inflected as DDR5 surpassed DDR4. HBM4 controller licensing ties Rambus directly to Nvidia’s next platform without building memory itself.

$ALAB — Astera Labs: Connectivity is the silent killer. GPUs wait on data. Astera fixes distance limits with PCIe Gen6 retimers and dominates CXL, which pools memory across servers. Revenue doubled YoY while posting software-like margins, reflecting near-zero competition. Gen6 products already exceed 20% of revenue, pulled forward by hyperscalers.

$SIMO — Silicon Motion: AI moves to the edge. PCs and phones need fast local storage to run models offline. Silicon Motion controls 30–40% of client SSD controllers and benefits as PCIe Gen5 raises ASPs. Design wins across most NAND makers make SIMO the independent arms dealer in edge AI storage.

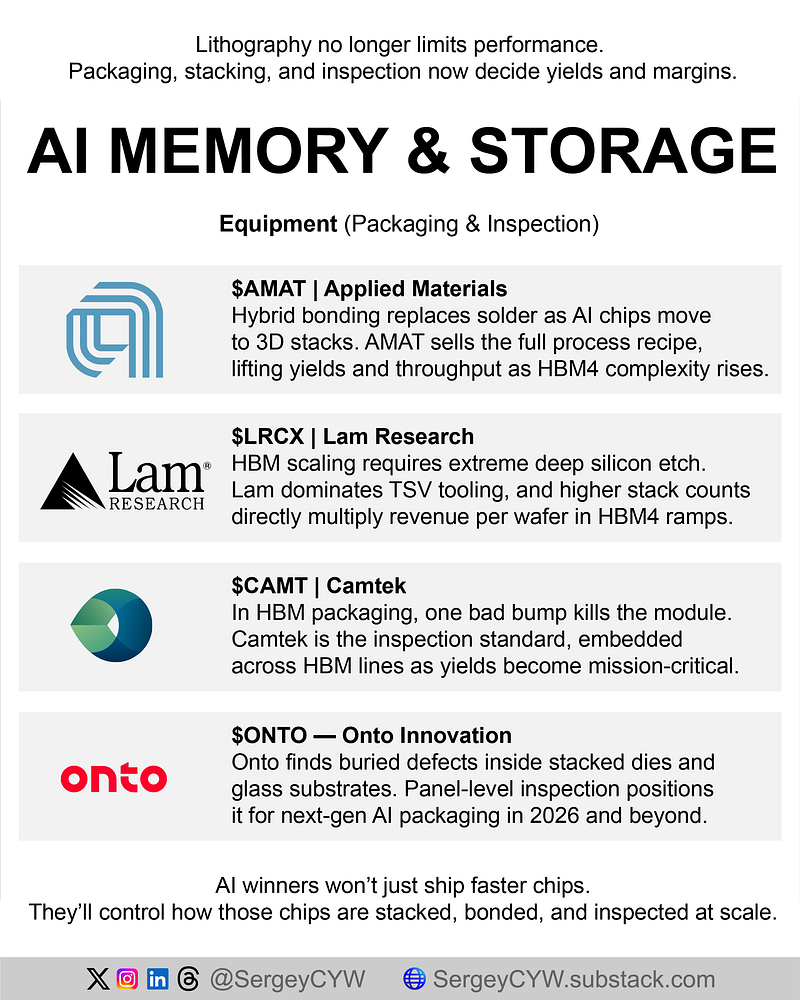

$AMAT — Applied Materials: AI chips fail in packaging, not lithography. Hybrid bonding replaces solder. Applied sells the full process recipe, not just tools. Advanced packaging orders tied to HBM exceeded $1B, and new bonding platforms deliver meaningful yield and throughput gains as HBM4 stacking complexity explodes.

$LRCX — Lam Research: HBM requires drilling billions of TSVs. Lam owns deep silicon etch with near-monopoly share. Cryo-etch tools enable the extreme aspect ratios demanded by 12- and 16-high stacks. Management flagged HBM and advanced packaging as its fastest-growing segments, with visibility into 2026 pilot ramps.

$CAMT — Camtek: One bad die kills a $40k package. Camtek dominates 3D bump metrology used in HBM packaging and remains tool-of-record for major memory makers. Guidance leans into late 2026 as HBM4 capacity comes online, extending a multi-year inspection upcycle.

$ONTO — Onto Innovation: Finds defects others can’t see. Dragonfly systems inspect buried flaws in interposers and glass substrates used for AI packaging. Qualified by major HBM customers and shipping at scale into CoWoS lines. Strong cash generation funds continued expansion as packaging intensity rises.