AI Memory Is a Key Bottleneck — But How Could Google’s TurboQuant Change the Narrative?

Memory and storage are clearly among the key bottlenecks in AI scaling. IDC and Gartner data suggest mainstream memory production will grow 16–17% in 2026, while high-bandwidth memory (HBM) consumes 3x more wafer area per GB than standard DRAM.

At the same time, expectations are extremely high. Analysts are pricing in massive revenue growth for 2026: $MU +175%, $SNDK +171%, SK Hynix +148%, $ALAB +61%, $SIMO +46%.

A lot of this is already reflected in stock prices. Even after a strong earnings report, $MU dropped 4.4%.

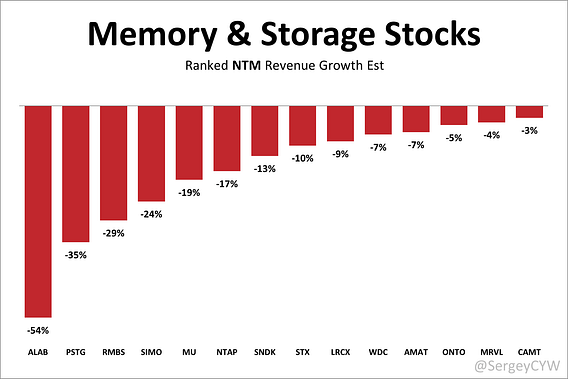

High expectations make the entire memory and storage sector fragile.

Google announced the TurboQuant algorithm — a compression method designed to drastically reduce memory usage in large language models.

The key bottleneck in AI inference is the KV cache. TurboQuant compresses it from 16 bits down to just 3 bits per value.
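To make "3 bits per value" concrete, here is a minimal sketch of plain uniform quantization in Python. This is not TurboQuant itself, whose internals are not described in this post. It is a generic textbook scheme, and the function names `quantize_uniform` and `dequantize_uniform` are hypothetical. It only shows that 3 bits means each value is stored as one of 8 codes, at the cost of some rounding error.

```python
import random

def quantize_uniform(values, bits=3):
    """Map floats to integer codes in [0, 2**bits - 1] over a shared min/max range.

    Generic uniform quantization for illustration only; NOT the TurboQuant algorithm.
    """
    levels = 2 ** bits
    lo, hi = min(values), max(values)
    # Step size between adjacent codes; fall back to 1.0 if all inputs are equal.
    scale = (hi - lo) / (levels - 1) or 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, lo, scale

def dequantize_uniform(codes, lo, scale):
    """Reconstruct approximate floats from integer codes."""
    return [lo + c * scale for c in codes]

random.seed(0)
values = [random.gauss(0.0, 1.0) for _ in range(1024)]  # stand-in for cached values
codes, lo, scale = quantize_uniform(values, bits=3)
restored = dequantize_uniform(codes, lo, scale)
max_error = max(abs(a - b) for a, b in zip(values, restored))
print(f"distinct codes: {len(set(codes))}, max abs error: {max_error:.3f}")
```

With this naive scheme the worst-case error per value is half a quantization step, which is usually too coarse at 3 bits. That gap is why a method claiming no measurable accuracy loss at that bit width would be notable.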

The result:

- 6x reduction in memory footprint
- Up to 8x speedup on Nvidia H100
- No measurable loss in accuracy
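As a rough sanity check on the memory claim, the sketch below estimates KV cache size at 16 bits versus 3 bits per value. The model shape (32 layers, 8 KV heads, head dimension 128, 32,768-token context) is a hypothetical example, not a figure from Google, and the helper name `kv_cache_bytes` is made up for illustration. The calculation also ignores any per-block metadata a real quantization scheme would store.

```python
def kv_cache_bytes(layers, kv_heads, head_dim, seq_len, batch, bits_per_value):
    """Approximate KV cache size in bytes for a transformer decoder."""
    # Factor of 2: each layer caches one key tensor and one value tensor.
    num_values = 2 * layers * kv_heads * head_dim * seq_len * batch
    return num_values * bits_per_value / 8

# Hypothetical model shape, chosen only to make the arithmetic easy to follow.
cfg = dict(layers=32, kv_heads=8, head_dim=128, seq_len=32_768, batch=1)

fp16 = kv_cache_bytes(**cfg, bits_per_value=16)
q3 = kv_cache_bytes(**cfg, bits_per_value=3)

print(f"16-bit cache: {fp16 / 2**30:.2f} GiB")  # 4.00 GiB
print(f"3-bit cache:  {q3 / 2**30:.2f} GiB")    # 0.75 GiB
print(f"ratio: {fp16 / q3:.2f}x")               # 5.33x
```

The raw bit-width ratio is 16/3, or about 5.3x, so this arithmetic alone does not reproduce the 6x headline figure. Either way, the per-request cache shrinks from gigabytes to well under one gigabyte in this example, which is the mechanism behind the demand concern.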

If widely adopted, this could significantly reduce expected demand for memory.

That means current growth forecasts may need to be revised.

Meanwhile, memory and storage stocks are already correcting from highs: $WDC -7%, $LRCX -9%, $STX -10%, $SNDK -13%, $NTAP -17%, $MU -19%, $SIMO -24%, $RMBS -29%, $PSTG -35%, $ALAB -54%.