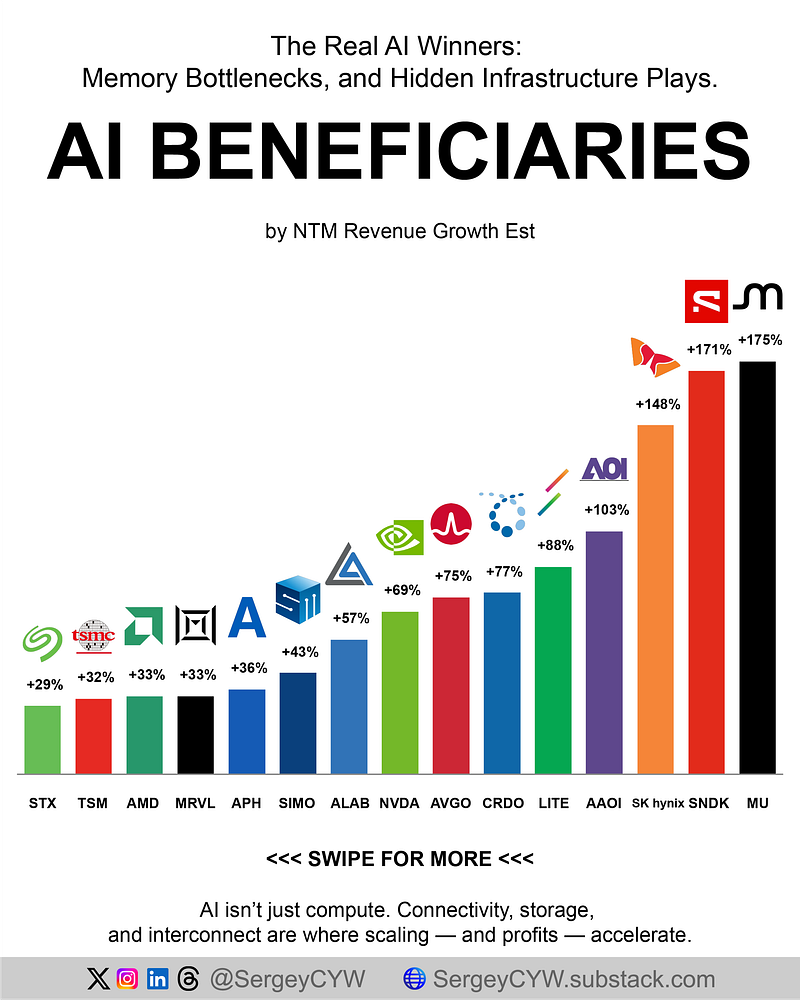

The Real AI Winners: Memory Bottlenecks and Hidden Infrastructure Plays

Next-twelve-month (NTM) growth shows where demand is flowing fastest 👇

$MU +175% NTM Micron sits at the core of AI memory. DRAM revenue reached $18.8B in one quarter, with HBM now the key driver. Ships HBM4 12-Hi stacks for next-gen accelerators under multi-year supply agreements. Cloud and data center units now dominate mix. Higher AI demand increases memory intensity per GPU, making each system more profitable. Pricing power expands as supply stays constrained.

$SNDK +171% NTM Pure-play NAND after spinoff. Focus is enterprise SSDs built on 218-layer BiCS8. AI workloads require massive storage for training datasets and checkpoints. Sequential growth in data-center SSDs accelerated as hyperscalers scaled clusters. Higher density per wafer lowers cost per TB. As AI scales, storage becomes structural bottleneck, lifting demand and margins.

SK hynix +148% NTM HBM leader supplying majority of advanced stacks for AI accelerators. HBM3E shipments drove record output, with HBM4 already locked into next-gen GPU platforms. Advanced packaging and stacking define moat. Memory is co-packaged beside the GPU die on the same interposer, making substitution difficult. As compute scales, memory bandwidth becomes limiting factor, reinforcing pricing strength.

$AAOI +103% NTM Vertically integrated optical supplier. Focus on 800G and 1.6T transceivers used in GPU clusters. Secured $53M hyperscaler order tied to AI deployments. Internal fabs limit supply elasticity. Demand exceeds capacity into 2027. Optical interconnect becomes critical as clusters scale. Higher AI capex translates directly into order backlog and pricing leverage.

$LITE +88% NTM Laser and photonics supplier for AI networks. Systems revenue reached $221M with strong demand from cloud customers. Capex focused on expanding optical manufacturing capacity. Strategic partnership with $NVDA to co-develop next-gen optics. As clusters scale, optical complexity increases. Each node requires more high-performance photonics.

$CRDO +77% NTM Connectivity silicon focused on AI racks. Active electrical cables (AECs) replace optical links to reduce power cost per port. Revenue reached $407M in one quarter with strong backlog visibility. Expanding into ZeroFlap optics and OmniConnect. As clusters grow, power efficiency becomes critical. Credo benefits from shift toward lower-energy interconnects.

$AVGO +75% NTM Custom AI accelerators and networking silicon. AI revenue reached ~$8.4B with networking contributing 1/3 of mix. Tomahawk and Jericho fabrics power cluster interconnect. Multi-year supply agreements lock capacity through 2028. Hyperscalers build custom chips at scale. Broadcom monetizes both compute and network layers.

$NVDA +69% NTM Defines AI compute stack. Data center revenue $62B in a single quarter. Blackwell supply constrained, not demand. Rubin platform targets major efficiency gains in inference. Software + hardware integration creates full-stack control. Each generation increases performance and pricing. Central node in AI infrastructure spending.

$ALAB +57% NTM Purpose-built AI connectivity. PCIe retimers and CXL controllers enable rack-scale compute. Gross margin above 75%, reflecting high-value positioning. Aries and Taurus products remove bandwidth limits inside clusters. Hyperscalers pay premium for scaling efficiency. As clusters grow, connectivity becomes limiting factor.

$SIMO +43% NTM Storage controller provider embedded in SSDs. PCIe Gen5 controllers power AI inference and edge systems. SSD Solutions segment surged >100% YoY driven by GPU partnerships. New SM8008 targets enterprise AI storage. Controllers sit inside every drive. AI storage growth directly increases unit demand.

$APH +36% NTM Connectors and interconnect hardware supplier. AI-related revenue reached $3.4B in one quarter. Book-to-bill above 1.1 signals demand exceeding supply. Every server and rack requires high-density connections. As data centers scale, interconnect complexity increases. Margins expand with higher-value applications.

$MRVL +33% NTM Custom AI ASICs and networking silicon. Built $1.5B annual ASIC business from near zero. Pipeline includes 18+ design wins across hyperscalers. Expanding into 1.6T optical DSP. Positioned across compute and networking layers. AI demand drives both segments simultaneously.

$AMD +33% NTM AI GPUs + EPYC CPUs. Data center mix now dominates with CPU attach rates increasing per GPU. MI300 and upcoming platforms expand footprint. Server CPUs required alongside accelerators. As clusters grow, CPU demand scales in parallel. Balanced exposure across compute layers.

$TSM +32% NTM Manufactures nearly all advanced AI chips. 3nm and 5nm nodes dominate AI production. CoWoS packaging remains supply constrained into 2027. Capex focused on advanced nodes and packaging expansion. Every AI chip depends on TSM capacity. Bottleneck position creates pricing power.

$STX +29% NTM Nearline HDD leader storing AI datasets. 165 exabytes shipped to data centers in one quarter. Storage demand tied to training and logging. HAMR technology enables 30TB+ drives. As AI models scale, storage requirements grow rapidly. HDD remains lowest-cost solution for bulk data.