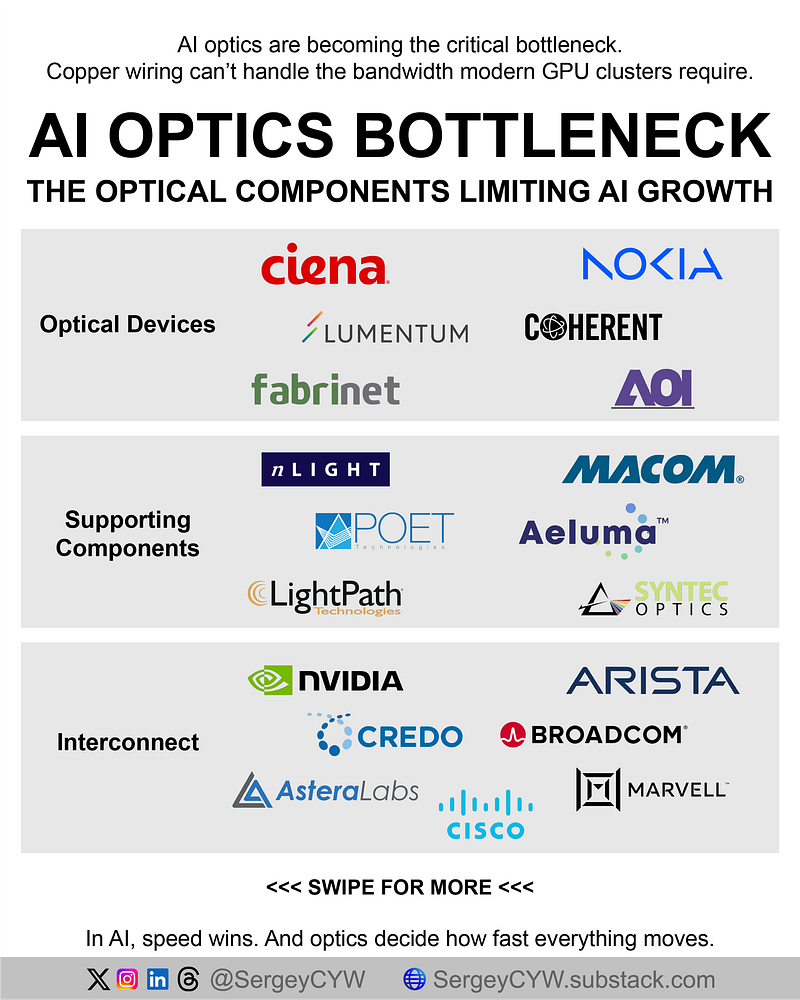

AI optics is becoming the real bottleneck in AI data centers

Copper is no longer enough. Modern GPU clusters need far more bandwidth, lower latency, and cleaner signal integrity than electrical links can reliably deliver. If optics don’t scale, GPUs sit idle, utilization drops, and AI infrastructure ROI compresses.

Optical modules, DSPs, switches, lasers, micro-optics, and coherent links now determine how fast hyperscalers can actually deploy AI capacity. Here’s the stack and the stocks tied to it. 👇

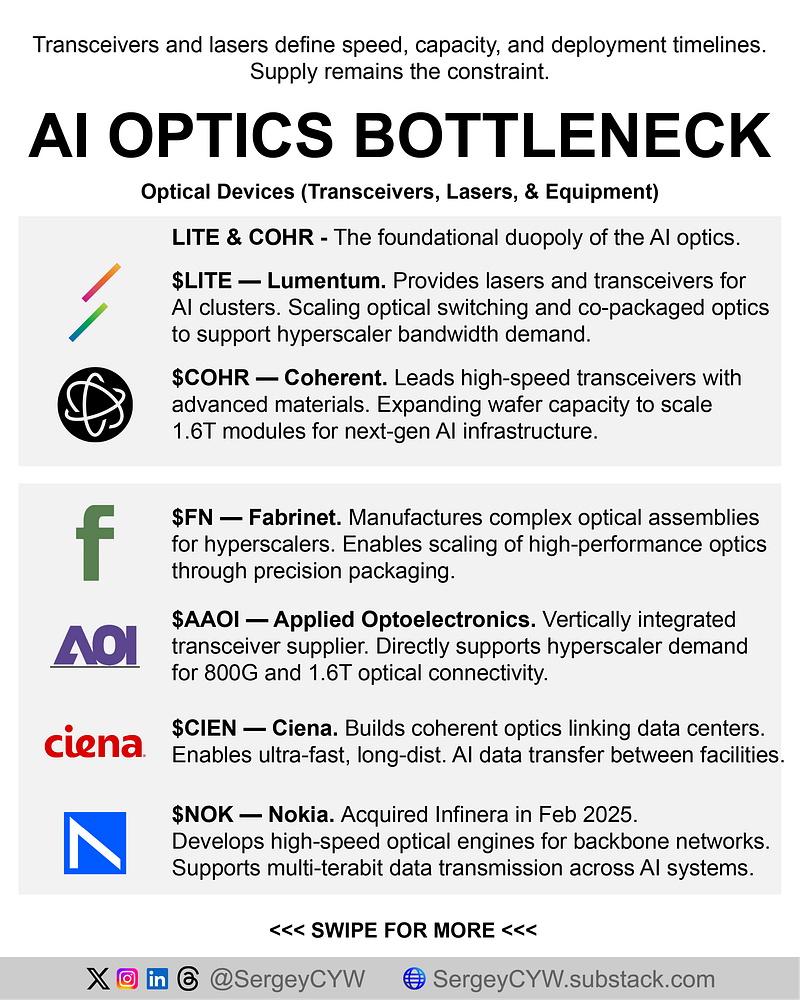

$LITE — Lumentum

$LITE sits at a critical point in the optics chain, supplying transceivers and laser components that move data inside AI clusters. In fiscal Q2 2026, systems sales reached $221.8M and non-GAAP operating margin hit 25.2%. The important part is roadmap positioning: production is ramping for Optical Circuit Switches and Co-Packaged Optics, with entry into optical scale-up targeted by late 2027.

$COHR — Coherent

$COHR is one of the core names in high-speed transceivers and a major beneficiary of the move to more advanced photonics. The data center segment now represents 72% of company activity, and management is doubling 6-inch Indium Phosphide wafer capacity by year-end. Why it matters: 6-inch wafers can deliver roughly 4x the chip output at less than half the cost versus legacy 3-inch formats.
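The 4x figure is consistent with simple geometry, since wafer area grows with the square of the diameter. A minimal sketch, assuming chip count scales with raw wafer area (it ignores edge exclusion and yield, and the function names are illustrative rather than anything from Coherent):

```python
import math

def wafer_area_mm2(diameter_inches: float) -> float:
    """Raw area of a circular wafer in square millimetres."""
    radius_mm = diameter_inches * 25.4 / 2
    return math.pi * radius_mm ** 2

def area_ratio(new_diameter_in: float, old_diameter_in: float) -> float:
    """How many times more raw area the larger wafer offers."""
    return wafer_area_mm2(new_diameter_in) / wafer_area_mm2(old_diameter_in)

def max_wafer_cost_multiple(chip_multiple: float, target_cost_fraction: float) -> float:
    """Largest per-wafer cost multiple that still meets the per-chip cost target."""
    return chip_multiple * target_cost_fraction

ratio = area_ratio(6, 3)
print(f"6-inch vs 3-inch raw area: {ratio:.1f}x")
print(f"Per-wafer cost can rise up to {max_wafer_cost_multiple(ratio, 0.5):.1f}x "
      "before per-chip cost exceeds half")
```

Under these assumptions, per-chip cost falls below half whenever processing a 6-inch wafer costs less than twice as much as processing a 3-inch one.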

$FN — Fabrinet

$FN is less visible to retail investors, but it is one of the most important manufacturing enablers in AI optics. It assembles complex optical packaging for major customers across the supply chain. High-performance computing products contributed $85.6M in fiscal Q2 2026. Capacity is also expanding materially, with a new 2M-square-foot Pinehurst facility planned for completion in late 2026.

$AAOI — Applied Optoelectronics

$AAOI is a direct read on hyperscaler demand for 800G and 1.6T modules. The company recently secured $124M in fresh orders for 800G single-mode transceivers, on top of an earlier $200M backlog tied to 1.6T products. Internal commentary suggests demand for 800G already exceeds available output through mid-2027, which makes capacity itself part of the thesis.

$CIEN — Ciena

$CIEN matters because AI data centers are not just about traffic inside one building. They also need high-speed links between facilities. In fiscal Q1 2026, Ciena deployed WaveLogic 6 Extreme 1.6Tbps to 18 new customers, bringing the total to 90 customers. Backlog also expanded by $2B to $7B, giving unusually strong visibility into future optical transport demand.

$NOK — Nokia

$NOK strengthened its AI data center story through the Infinera deal and now has more exposure to coherent optics. The combined platform recently completed live trials using the ICE7 optical engine, built on a 5nm CMOS DSP, delivering over 140 gigabaud and up to 1.2Tbps per wavelength. One field test achieved 1Tbps across a 1,391-kilometer link, which matters for long-haul AI interconnect.
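Taken together, the baud rate and per-wavelength bit rate imply how much information each transmitted symbol carries. A rough sketch, assuming a dual-polarization signal and treating 1.2Tbps as the net rate at exactly 140 gigabaud (the figure quoted is "over 140", so the result is an upper bound, and forward-error-correction overhead is ignored):

```python
def bits_per_symbol(bit_rate_bps: float, symbol_rate_baud: float) -> float:
    """Net bits carried by each transmitted symbol."""
    return bit_rate_bps / symbol_rate_baud

BIT_RATE_BPS = 1.2e12      # up to 1.2Tbps per wavelength (from the text)
SYMBOL_RATE_BAUD = 140e9   # "over 140 gigabaud", taken at face value
POLARIZATIONS = 2          # assumption: dual-polarization coherent signal

total = bits_per_symbol(BIT_RATE_BPS, SYMBOL_RATE_BAUD)
per_pol = total / POLARIZATIONS

print(f"Net bits per symbol (both polarizations): {total:.2f}")
print(f"Net bits per symbol per polarization:     {per_pol:.2f}")
```

Under those assumptions the result is about 4.3 net bits per symbol per polarization, which sits between plain 16QAM (4 bits) and 64QAM (6 bits).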

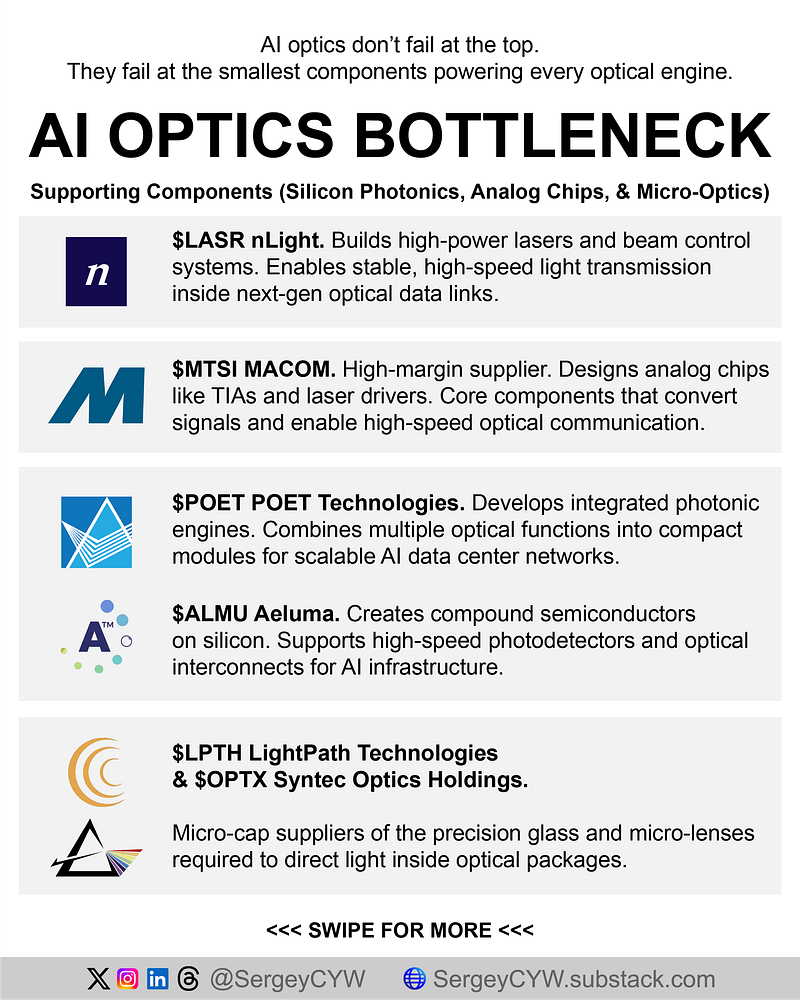

$LASR — nLight

$LASR is not a mainstream AI optics stock, but its laser and beam-control technology could become increasingly relevant as optical transmission complexity rises. Q4 2025 revenue was $81.2M, with product gross margin at 37.3% and adjusted EBITDA at $10.7M. Management is intentionally shifting away from lower-value legacy markets and prioritizing higher-margin sensing and directed energy applications with more strategic optical value.

$MTSI — MACOM

$MTSI provides analog building blocks inside optical modules, including laser drivers and transimpedance amplifiers (TIAs), which are indispensable for higher-speed transceivers. In fiscal Q1 2026, data center revenue reached a record $85.8M out of $271.6M total revenue, while adjusted gross margin hit 57.6%. Management raised its data center outlook to 35–40% growth and cited accelerating demand around 1.6T optics and linear pluggable optics (LPO) architectures.

$POET — POET Technologies

$POET is earlier-stage and higher-risk, but it targets a crucial layer: optical engines and photonic integrated circuits for AI networks. The company secured more than $225M in institutional financing to scale production and also landed a direct order above $5M for its POET Infinity optical engines. It is also developing 400G thin-film lithium niobate modulators aimed at future 3.2T engines.

$ALMU — Aeluma

$ALMU is a micro-cap name tied to the materials side of future optical interconnects. Q4 2025 revenue was $1.3M, driven by R&D contracts, but the strategic angle matters more than the current size. The U.S. Navy awarded a program focused on high-speed photodetectors for optical interconnects in HPC and AI infrastructure. Aeluma also has a NASA collaboration around nonlinear optical materials integrated into silicon photonics.

$LPTH — LightPath Technologies

$LPTH supplies micro-lenses and optical assemblies that help couple and direct light properly inside modules. If alignment quality is weak, performance degrades quickly. In fiscal Q2 2026, revenue reached a record $16.4M, while gross profit climbed 212% year over year to $6M. Gross margin expanded to 37%, helped by a richer mix of complex optical assemblies and integrated module-level products.

$OPTX — Syntec Optics

$OPTX focuses on micro-lens arrays and precision optical components used to align lasers and fiber arrays in photonic packages. In Q4 2025, net sales were $7.5M, while gross profit doubled sequentially to $1.8M and gross margin improved from 12% to 24%. More important than current scale, several programs moved from design into pilot production, which is often the inflection point in optical component commercialization.
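The Syntec margin figures can be cross-checked against the dollar amounts. A quick sketch using only the rounded numbers quoted above, so the results are approximate; the prior-quarter values are inferred here, not reported figures:

```python
def gross_margin(gross_profit: float, net_sales: float) -> float:
    """Gross margin as a fraction of net sales."""
    return gross_profit / net_sales

NET_SALES_M = 7.5      # Q4 2025 net sales, $M (from the text)
GROSS_PROFIT_M = 1.8   # Q4 2025 gross profit, $M (from the text)
PRIOR_MARGIN = 0.12    # prior-quarter gross margin (from the text)

current_margin = gross_margin(GROSS_PROFIT_M, NET_SALES_M)

# "Doubled sequentially" implies the prior quarter's gross profit was about half.
prior_gross_profit_m = GROSS_PROFIT_M / 2
implied_prior_sales_m = prior_gross_profit_m / PRIOR_MARGIN

print(f"Current gross margin: {current_margin:.0%}")
print(f"Implied prior-quarter net sales: ${implied_prior_sales_m:.1f}M")
```

On these rounded inputs the implied prior-quarter sales are roughly the same as the current quarter's, which would suggest the gross profit gain came from margin rather than volume.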

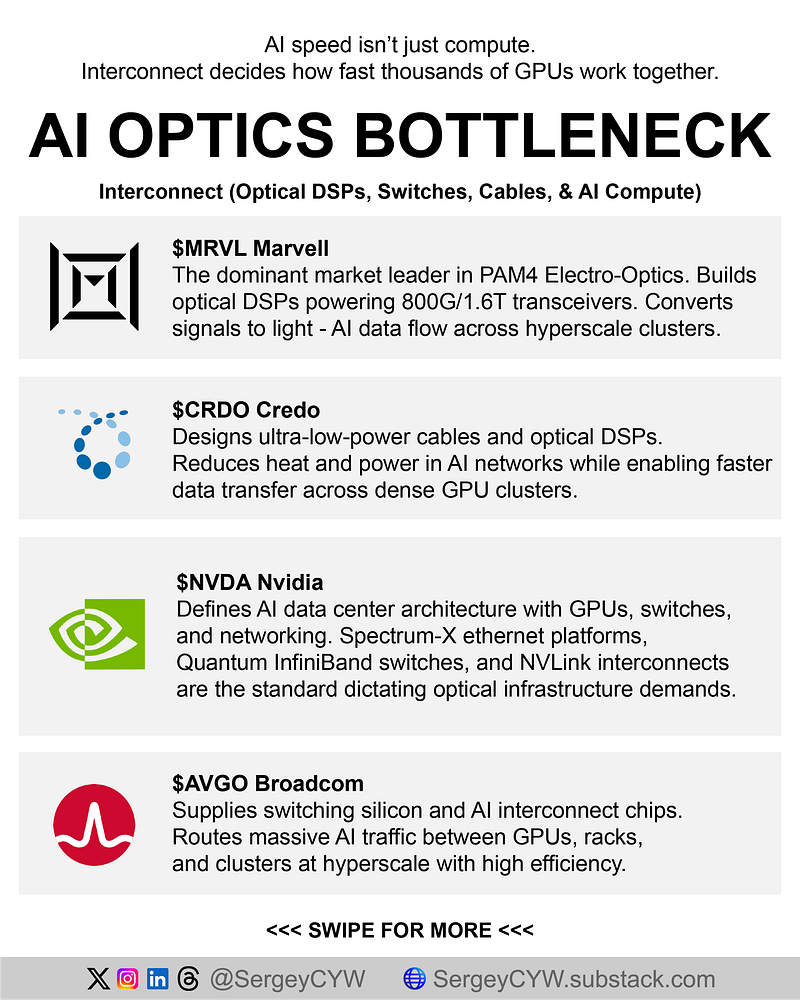

$MRVL — Marvell

$MRVL is a central player because optical transceivers need high-performance DSPs to convert electrical signals into photonic output. In fiscal Q4 2026, total revenue hit $2.219B, including $1.65B from data center. Management also guided $2.4B for the next quarter with non-GAAP gross margin around 59%. The Celestial AI and XConn acquisitions deepen its exposure to optical connectivity and scale-out AI fabrics.
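Two ratios help put the Marvell figures in context: the data center share of revenue and the sequential growth implied by guidance. A small sketch using only the numbers quoted above (the helper names are illustrative, and the guidance figure is treated as a point estimate):

```python
def share(part: float, whole: float) -> float:
    """Fraction of the whole represented by the part."""
    return part / whole

def sequential_growth(next_value: float, current_value: float) -> float:
    """Quarter-over-quarter growth as a fraction."""
    return next_value / current_value - 1

TOTAL_REVENUE_B = 2.219        # fiscal Q4 2026 total revenue, $B
DATA_CENTER_REVENUE_B = 1.65   # fiscal Q4 2026 data center revenue, $B
NEXT_QUARTER_GUIDE_B = 2.4     # next-quarter revenue guidance, $B

dc_share = share(DATA_CENTER_REVENUE_B, TOTAL_REVENUE_B)
growth = sequential_growth(NEXT_QUARTER_GUIDE_B, TOTAL_REVENUE_B)

print(f"Data center share of revenue: {dc_share:.1%}")
print(f"Implied sequential growth:    {growth:.1%}")
```

That works out to data center at roughly three quarters of revenue, with guidance implying about 8% sequential growth.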

$CRDO — Credo

$CRDO is one of the most direct plays on lower-power AI interconnects. It builds active electrical cables (AECs) and optical DSPs specifically for large-scale fabrics where power efficiency matters almost as much as speed. Fiscal Q3 2026 revenue reached $407M, with non-GAAP gross margin at 68.6%. The company also hit milestones in PCIe Gen6 AECs and launched newer DSP families tailored for next-generation AI cluster architectures.

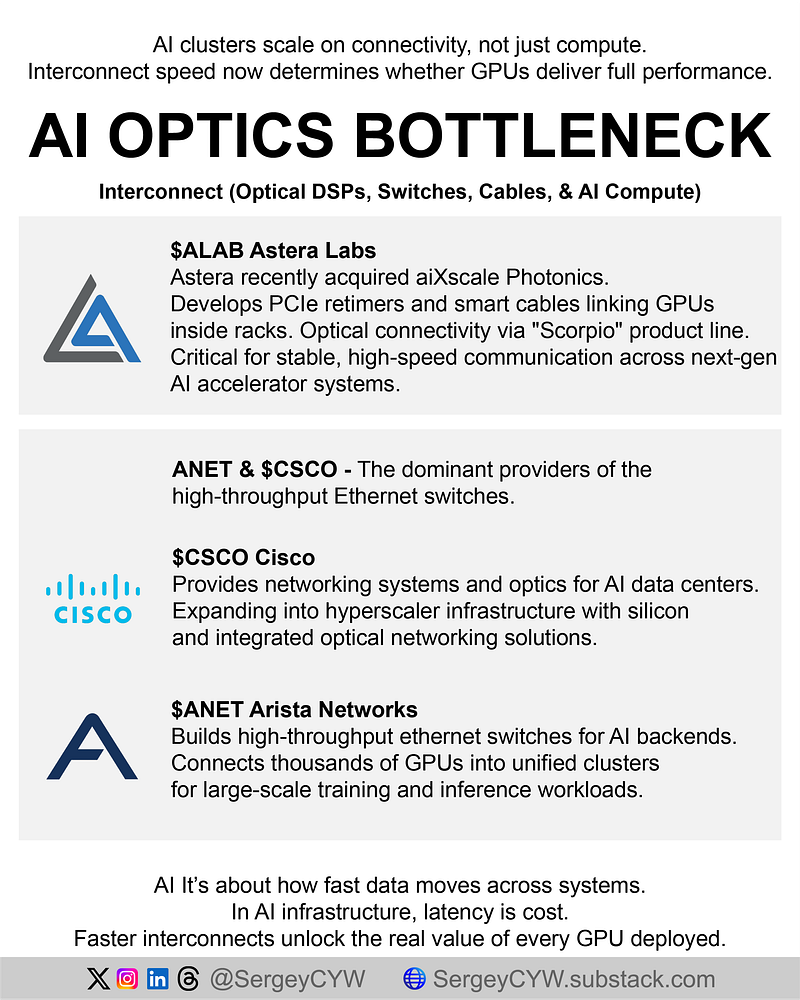

$ALAB — Astera Labs

$ALAB plays more at the rack and board level, but still sits inside the interconnect bottleneck. Its PCIe retimers, smart cable modules, and switches help keep GPU connectivity clean and scalable. Q4 2025 revenue was $270.6M, and the Aries PCIe 6 retimer portfolio grew 70% year over year. The newer Scorpio line already accounts for more than 15% of total revenue, driven by PCIe Gen6 switching demand.

$AVGO — Broadcom

$AVGO is arguably one of the most powerful infrastructure names in AI because it spans switching silicon, custom AI chips, and optical routing technologies. In fiscal Q1 2026, AI semiconductor revenue alone hit $8.4B, and adjusted EBITDA margin was 68%. Management has publicly pointed to a path toward more than $100B in AI chip revenue by 2027, which keeps Broadcom at the center of hyperscaler-scale buildouts.

$ANET — Arista Networks

$ANET remains a top name in AI backend Ethernet switching. In Q4 2025, net income exceeded $1B for the first time, and cloud plus AI data center products made up 65% of the company’s $9B annual revenue base. Arista deployed 800G Ethernet to more than 100 customers during the year, and cumulative port shipments moved above 150M. AI cluster networking is now a core growth pillar.

$CSCO — Cisco

$CSCO is becoming more relevant in hyperscale AI through specialized silicon and optics. Fiscal Q2 2026 revenue was $15.3B, while AI infrastructure orders from hyperscalers alone reached $2.1B in the quarter, matching the full prior fiscal year. Management described the AI product mix as roughly 60% systems / 40% optics and expects to recognize more than $3B in hyperscaler AI infrastructure revenue in fiscal 2026.

$NVDA — Nvidia

$NVDA is not just the GPU leader. It is increasingly defining the architecture of the full AI data center, including switching and interconnect. In fiscal Q4 2026, networking revenue reached $11B for the quarter and more than $31B for the year, about 10x the level from the year Mellanox was acquired. Spectrum-X is becoming a major Ethernet fabric for AI factories, especially as enterprises move beyond isolated clusters.