AI Memory Stocks Are Crushing the Market

The AI memory bottleneck is spreading across DRAM, NAND, SSD controllers, HDDs, advanced packaging, process control, server racks, and wafer fab equipment.

Every layer of the stack is being repriced as AI workloads demand more bandwidth, more capacity, and faster data movement.

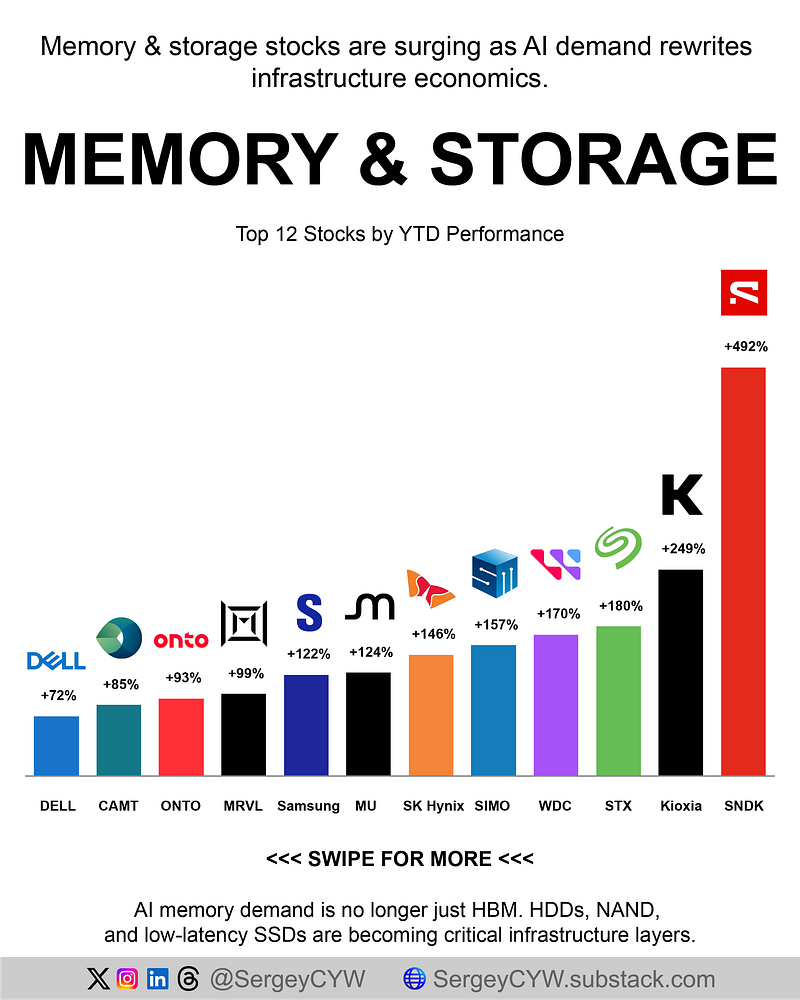

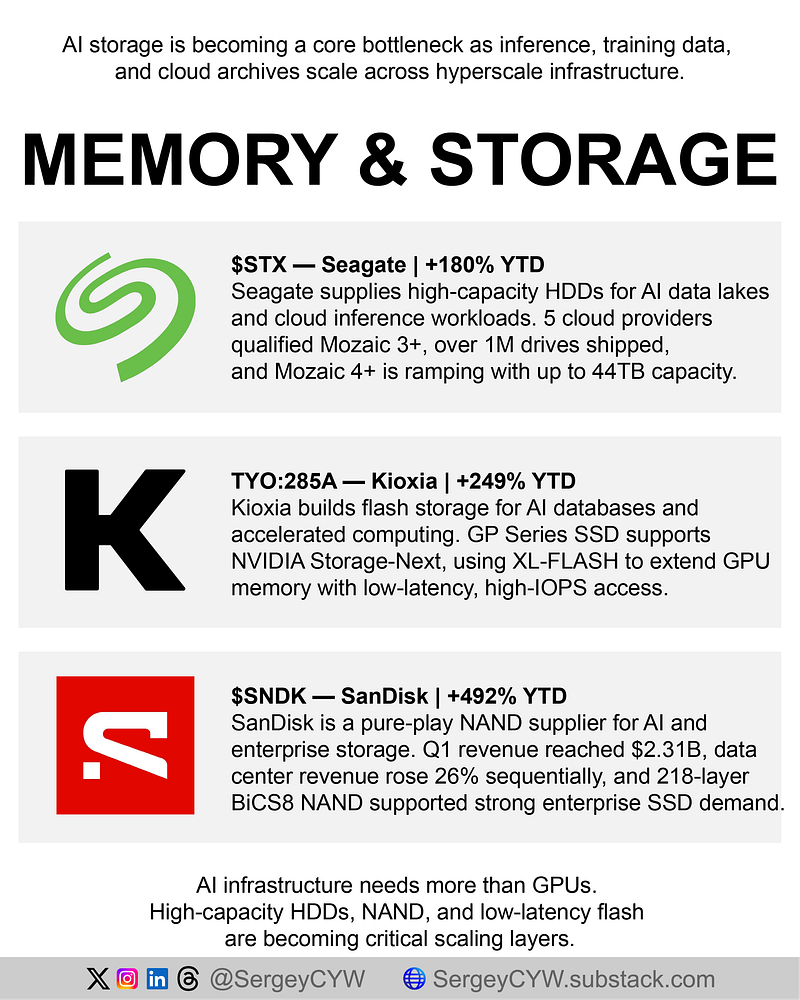

Memory and storage stocks YTD performance 👇

$SNDK — SanDisk | +492% YTD

SanDisk is a pure-play NAND flash supplier positioned around enterprise SSD demand for AI clusters. Since its spin-off from Western Digital, the company has leaned into fast-retrieval storage for high-performance computing. Data center revenue rose 26% sequentially in Q1, helped by constrained enterprise SSD supply and strong pricing. Its 218-layer BiCS8 3D NAND gives hyperscalers higher bit density and better energy efficiency.

Kioxia — Kioxia | +249% YTD

Kioxia is pushing flash deeper into accelerated computing. Its GP Series SSD is designed to let GPUs access high-speed flash as an extension of expensive HBM. The product supports NVIDIA Storage-Next and uses XL-FLASH for low-latency, high-IOPS access. For AI databases and retrieval-heavy workloads, Kioxia is trying to make storage behave more like a memory expansion layer.

$STX — Seagate | +180% YTD

Seagate anchors the physical storage layer for AI data centers through extreme-capacity nearline HDDs. Its Mozaic 3+ platform has now been qualified by five global cloud service providers, with more than 1M drives shipped during the quarter. Management also began an early volume ramp of Mozaic 4+, which reaches up to 44TB per drive with minimal bill-of-materials changes. More capacity, better economics.

$WDC — Western Digital | +170% YTD

Western Digital supplies high-capacity drives used to store AI datasets, inference outputs, and cloud archives. The company is scaling mass production of 40TB HAMR drives built for hyperscale storage needs. Management expects agentic AI workloads to accelerate exabyte demand because inference creates more persistent data. Non-GAAP EPS reached $2.72, showing storage leverage is becoming visible in earnings.

$SIMO — Silicon Motion | +157% YTD

Silicon Motion provides the controllers that manage data flow between NAND flash and computing systems. Its PCIe Gen 5 controllers are increasingly relevant for AI PCs and enterprise servers needing faster storage access. Q1 marked the strongest quarter in company history, while embedded eMMC and UFS products grew over 140% YoY. Client SSD controller sales rose 45%, helped by AI-focused controller demand.

SK Hynix — SK Hynix | +145% YTD

SK Hynix remains the key HBM supplier for AI accelerators, with leadership built around yields, technical execution, and customer access. Q1 operating profit margin reached 72%, while standard DRAM contract pricing spiked 83% QoQ. Management highlighted growing demand from agentic AI systems across conventional server DRAM and high-speed enterprise SSDs. DRAM shipments are expected to rise by a high-single-digit percentage in Q2.

$MU — Micron | +124% YTD

Micron is one of the core DRAM, NAND, and HBM suppliers for AI infrastructure. Its 2026 HBM capacity is already sold out, with long-term customer commitments supporting visibility. Management is expanding around HBM4 and next-gen 1-gamma DRAM while planning roughly $20B in capex. Tight supply across DRAM and NAND may persist through 2026, giving Micron room to improve margins as demand stays elevated.

Samsung — Samsung Electronics | +122% YTD

Samsung supplies foundational DRAM and HBM stacks used across AI servers. The company is benefiting from intense shortages in standard memory, with DRAM pricing rising 51% sequentially. Operating profit surged nearly 6x YoY, driven by memory recovery and AI data center demand. Samsung expects server memory demand to stay strong through the back half of 2026, while HBM revenue is expected to triple.

$MRVL — Marvell | +99% YTD

Marvell connects compute and memory through custom silicon, interconnects, and multi-die packaging. Its data center division reached $1.44B in Q1 revenue, making AI infrastructure the core growth engine. The company introduced a multi-die platform using proprietary interposer technology to improve die-to-die connectivity and power efficiency. More AI accelerators mean more demand for custom data movement.

$ONTO — Onto Innovation | +93% YTD

Onto Innovation provides inspection, process control, and metrology tools used in HBM and advanced logic production. Its Dragonfly G5 platform was qualified at leading logic and HBM customers, supporting advanced-node momentum. Advanced-node revenue grew 13%, while Q2 revenue guidance of $320M to $330M implies further acceleration. More HBM complexity means more inspection steps, and ONTO sits directly in that workflow.

$CAMT — Camtek | +85% YTD

Camtek supports AI memory manufacturing through inspection and metrology for chiplet packaging and HBM assembly. Q1 orders from leading OSAT customers exceeded $90M, including a $31M multi-system order for CoWoS-like advanced packaging applications. Management expects Hawk and Eagle Gen 5 systems to represent at least 50% of 2026 system shipments. HBM4 complexity keeps increasing the need for defect detection.

$DELL — Dell Technologies | +72% YTD

Dell sits at the AI infrastructure integration layer, combining GPUs, massive memory, and shared storage inside optimized server racks. PowerEdge AI server orders nearly doubled during Q1, while server and networking revenue reached $6.5B. Dell also introduced a context memory storage platform using NVIDIA technology to offload key-value cache from GPU memory to shared storage. Longer AI context needs better memory architecture.

$LRCX — Lam Research | +61% YTD

Lam Research enables the memory supply chain through etching and deposition tools used in DRAM, NAND, HBM, and 3D chip integration. DRAM tools represented 16% of systems revenue, while AI demand pushed management to raise its 2026 wafer fabrication equipment outlook to $140B. Lam also sees over $40B in NAND upgrade investment needed before 2027 as producers move toward 200-plus-layer technology.