Top 5 Memory and Storage Companies Sitting Under the AI Data Center Buildout

Every AI model needs more than compute. Training, inference, RAG, and agentic AI all depend on memory and storage capacity scaling alongside it.

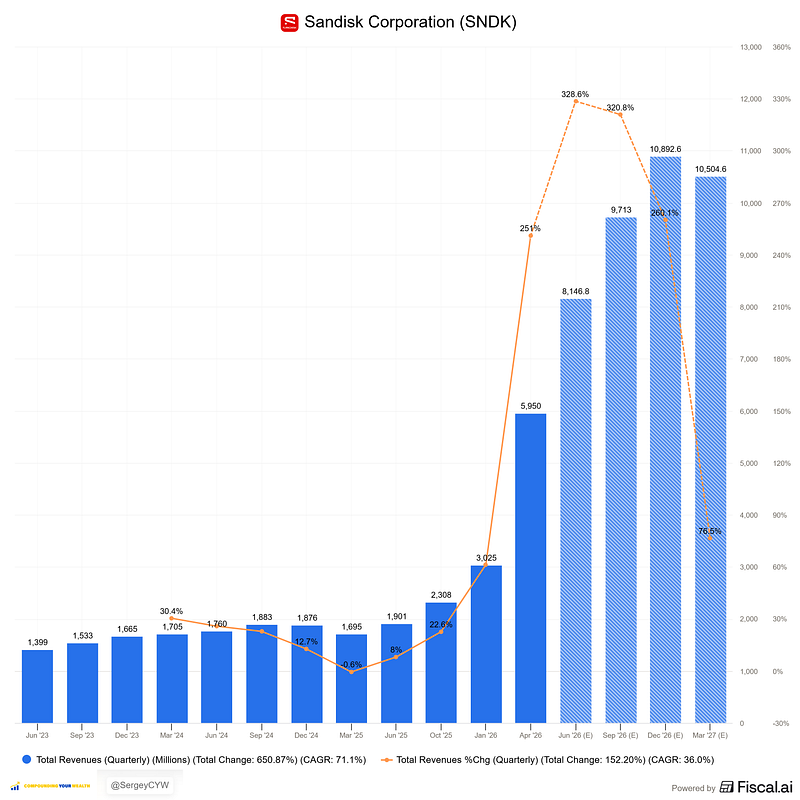

$SNDK — SanDisk | +464% YTD

$SNDK is the pure-play NAND flash angle on AI inference. Its role is low-latency storage close to compute for KV-cache, RAG, and retrieval-heavy workloads. Q3 brought three long-term contracts representing $42B in minimum contractual revenue and $11B+ in guarantees. The thesis is a shift from spot NAND volatility to contracted AI infrastructure supply.

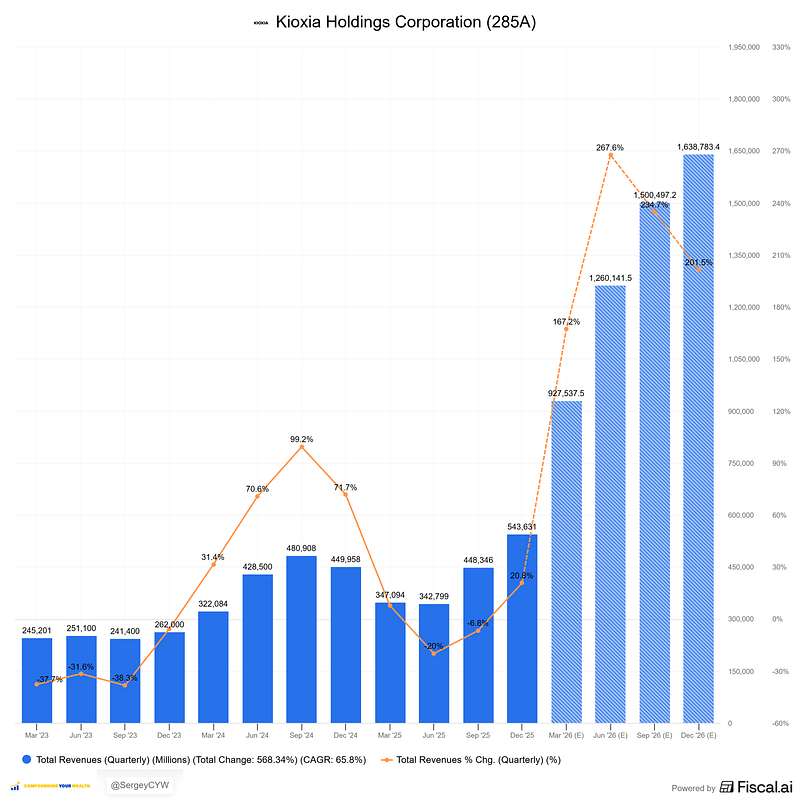

Kioxia $285A.T | +249% YTD

Kioxia is the GPU-direct flash storage play. At NVIDIA GTC 2026, it announced the GP Series for Storage-Next architecture: 100M+ IOPS, XL-FLASH Storage Class Memory, and 512-byte access granularity. Role in AI: extend HBM with high-capacity flash near the GPU. If Storage-Next becomes standard, Kioxia moves from NAND vendor to memory-tier architecture supplier.

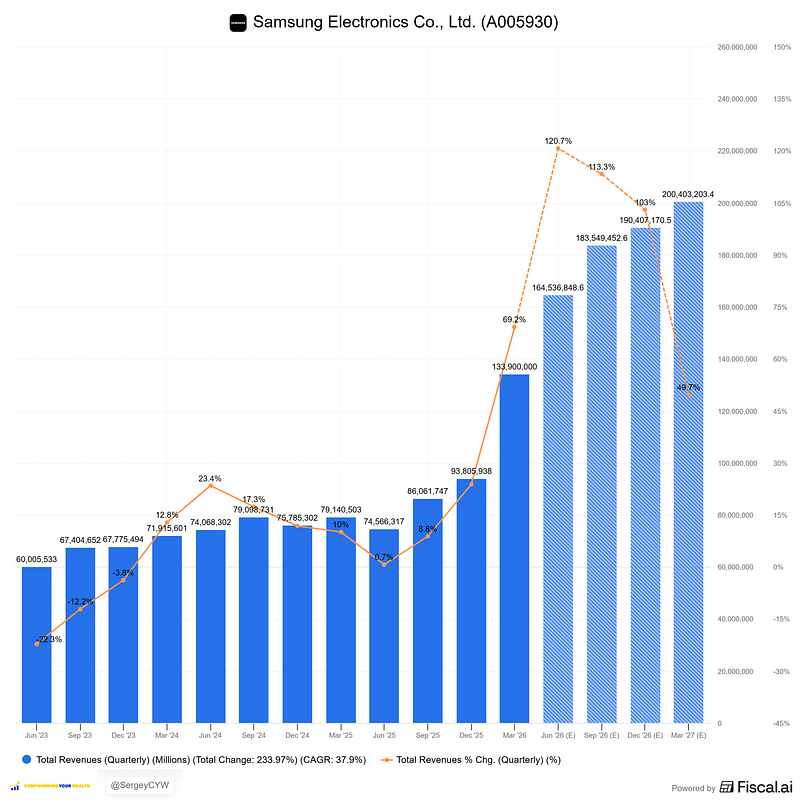

Samsung $005930.KS | +122% YTD

Samsung is the full-stack memory incumbent: DRAM, HBM, NAND, enterprise SSDs, and PCIe Gen6 eSSD for KV-cache storage. Its AI role spans HBM4 for Vera Rubin, SOCAMM2 for CPU platforms, and server DRAM procurement tied to LLM services. HBM4 needs 2–3x more wafer area than HBM3E, tightening capacity and supporting premium memory pricing.

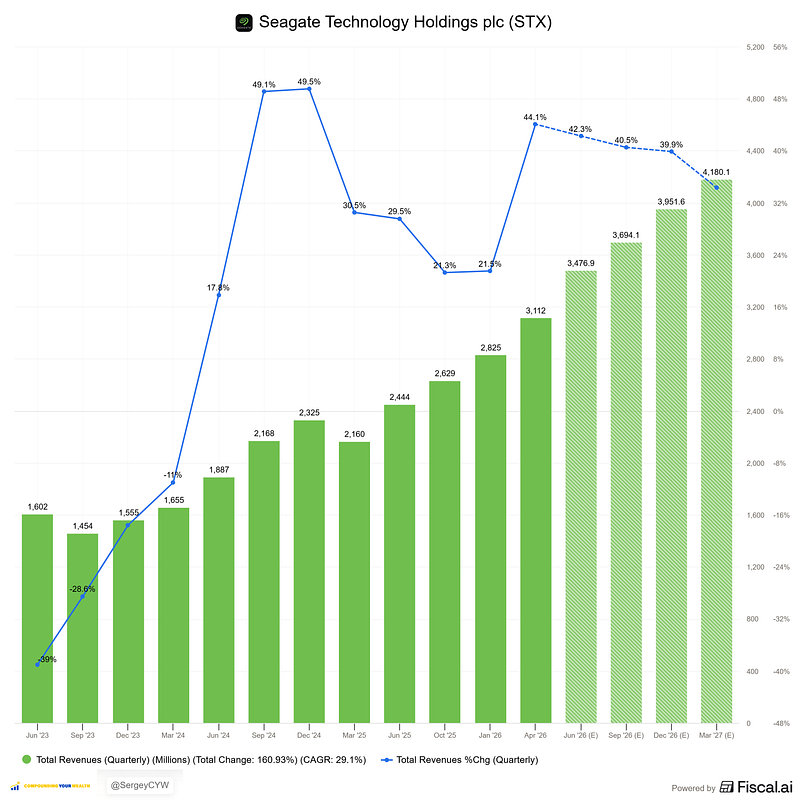

$STX — Seagate | +178% YTD

$STX owns the cold-storage layer of AI. Models need historical datasets, logs, training corpora, and inference records stored at exabyte scale. Near-line HDD remains the cost-effective medium. Mozaic HAMR 32TB+ drives received qualification from five major cloud providers. Each HAMR generation roughly doubles areal density, helping data centers add capacity without adding footprint.

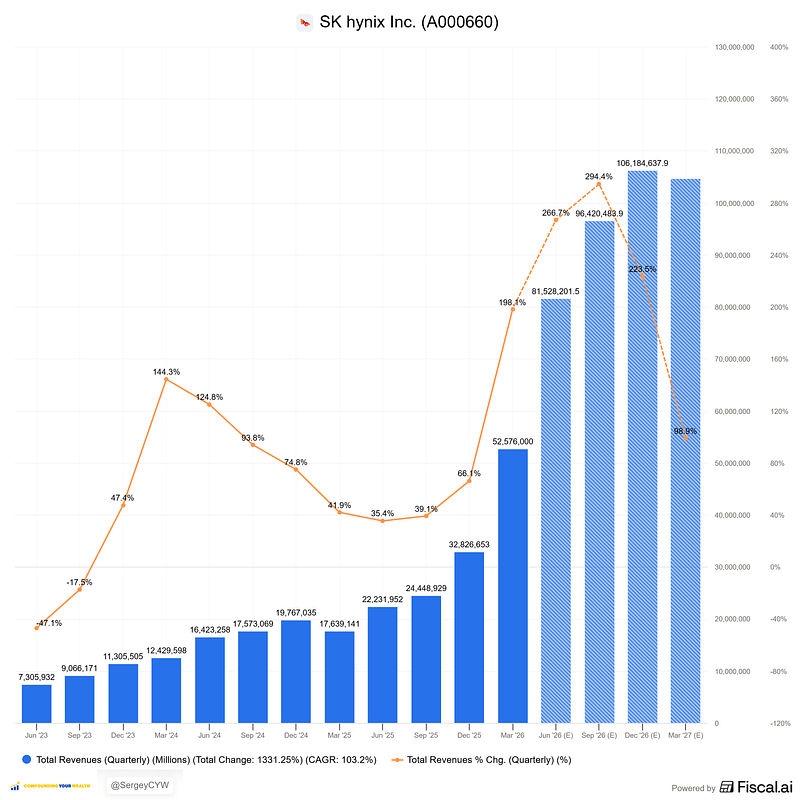

SK Hynix $000660.KS | +146% YTD

SK Hynix is the HBM leader. It holds 57% of the global HBM market and remains NVIDIA’s primary supplier across major GPU platforms. HBM3E is shipping at scale, while HBM4 qualification for Vera Rubin is on track for H2 2026. AI chip demand exceeds capacity. Agentic AI increases memory bandwidth per inference task, expanding Hynix content per server.