After interviewing multiple AI/ML engineers, I've noticed that many are still confused about how exactly to reduce latency, for both machine learning models and LLMs.

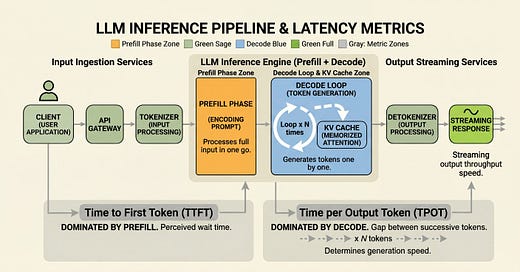

The techniques are well known. What's missing is the diagnosis. Quantization won't help if your bottleneck is prefill. Caching won't help if your bottleneck is decode. Most teams burn weeks on the wrong optimization because they skipped that step.
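To make the diagnosis step concrete, here is a minimal sketch, assuming you log when each request was sent and when every streamed output token arrived. It treats time to first token (TTFT) as a proxy for prefill and the time after the first token as decode. TTFT also absorbs queueing and network delay, so the split is rough. The names `split_latency` and `LatencyBreakdown` are hypothetical, not part of any serving library.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass
class LatencyBreakdown:
    ttft_s: float        # time to first token; proxy for prefill (plus queueing/network)
    decode_s: float      # time from first token to last token
    tpot_s: float        # mean time per output token after the first
    dominant_phase: str  # "prefill" or "decode", whichever took longer


def split_latency(request_start: float, token_times: Sequence[float]) -> LatencyBreakdown:
    """Split one streamed request's latency into prefill-like and decode portions.

    Assumes token_times are monotonic timestamps (seconds) at which each output
    token arrived, and request_start is when the request was sent.
    """
    if not token_times:
        raise ValueError("need at least one generated token")
    ttft = token_times[0] - request_start
    decode = token_times[-1] - token_times[0]
    n_after_first = len(token_times) - 1
    tpot = decode / n_after_first if n_after_first else 0.0
    dominant = "prefill" if ttft >= decode else "decode"
    return LatencyBreakdown(ttft, decode, tpot, dominant)


if __name__ == "__main__":
    # Hypothetical trace: 0.8 s to the first token, then 99 more tokens 20 ms apart.
    times = [0.8 + 0.02 * i for i in range(100)]
    breakdown = split_latency(0.0, times)
    print(breakdown.dominant_phase)  # decode
```

In this made-up trace, decode (about 2 s) outweighs TTFT (0.8 s), so a decode-side fix would be the lever to look at first. Aggregating the breakdown over many real requests gives a more trustworthy picture than any single trace.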

This post walks through ten techniques across ML and LLM serving, organized by the phase of the pipeline each one actually fixes, so you know which lever to pull and when.

May 7 at 1:20 PM