
From Math Engine to Real-World Tool: Week 6 Update

This week marked a turning point in our project, not in technology but in purpose.

Rethinking What We Built

We began with a system that was technically solid. Our earlier work focused on geometric transformations: objects rotating in space, multiple synchronized views, gesture-based interaction, and even AR visualization. It was visually impressive and functionally rich.

But there was a lingering question:

“What is this actually solving?”

That question pushed us to step back and look at our system differently. Instead of asking which features to add next, we asked:

“Where can this engine actually be useful?”

The Shift in Perspective

The breakthrough came when we realized that everything we had built (transformations, multiple viewpoints, spatial interaction) maps naturally onto real-world spatial planning problems.

One such problem stood out: security camera placement.

At its core, camera planning involves:

Understanding field of view

Positioning devices in 3D space

Identifying blind spots

Optimizing coverage
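The first item, field of view, already reduces to a small geometric test in a top-down plan. Here is a minimal Python sketch, assuming a camera modeled as a flat circular sector (a heading, a total field-of-view angle, and a maximum range) and ignoring walls; the function name and parameters are hypothetical, not taken from our engine.

```python
import math


def in_field_of_view(cam_x, cam_y, heading_deg, fov_deg, max_range, px, py):
    """Return True if point (px, py) lies inside a camera's 2D viewing wedge.

    Hypothetical model: the camera sees a circular sector centred on
    `heading_deg` with total angular width `fov_deg`, out to `max_range`.
    Walls and other occluders are ignored.
    """
    dx, dy = px - cam_x, py - cam_y
    dist = math.hypot(dx, dy)
    if dist > max_range:
        return False
    if dist == 0:
        return True  # the camera's own position counts as covered
    # Angle between the camera heading and the direction to the point,
    # wrapped into [-180, 180) so headings near 0/360 compare correctly.
    bearing = math.degrees(math.atan2(dy, dx))
    offset = (bearing - heading_deg + 180) % 360 - 180
    return abs(offset) <= fov_deg / 2
```

For example, a camera at the origin facing along +x with a 90° field of view and a 10 m range covers (5, 4) but not (0, 5), which lies 90° off its heading.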

In other words, it is the same math we were visualizing before, now applied to a practical problem.

That realization led us to pivot the project.

Building a Usable System

We started simple.

The first iteration focused on a 2D layout, where cameras could be placed and their coverage areas visualized. This immediately made the system easier to understand: instead of abstract shapes, we now had a concrete use case.
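One simple way to compute coverage for a 2D layout like this is to sample the floor plan on a grid and mark every cell that at least one camera can see; the unmarked cells are the blind spots. The sketch below is illustrative only: it assumes each camera sees a flat wedge, treats the room as an empty rectangle with no walls, and uses hypothetical names (`Camera`, `coverage_map`).

```python
import math
from dataclasses import dataclass


@dataclass
class Camera:
    x: float
    y: float
    heading_deg: float  # direction the camera faces, measured from +x
    fov_deg: float      # total horizontal field of view
    max_range: float    # how far the camera can usefully see


def sees(cam: Camera, px: float, py: float) -> bool:
    """Wedge test: is (px, py) within range and within half the FOV of the heading?"""
    dx, dy = px - cam.x, py - cam.y
    dist = math.hypot(dx, dy)
    if dist > cam.max_range:
        return False
    if dist == 0:
        return True
    offset = (math.degrees(math.atan2(dy, dx)) - cam.heading_deg + 180) % 360 - 180
    return abs(offset) <= cam.fov_deg / 2


def coverage_map(width, height, cell, cameras):
    """Sample the centre of each grid cell; return (covered fraction, blind cells)."""
    cols, rows = int(width // cell), int(height // cell)
    blind = []
    for r in range(rows):
        for c in range(cols):
            px, py = (c + 0.5) * cell, (r + 0.5) * cell
            if not any(sees(cam, px, py) for cam in cameras):
                blind.append((c, r))  # no camera sees this cell: a blind spot
    total = cols * rows
    return (total - len(blind)) / total, blind
```

The list of blind cells is what a visualization would highlight, and the covered fraction gives a single number for comparing candidate placements. A finer `cell` gives a more accurate map at the cost of more checks.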

However, the limitation was obvious:

2D planning cannot capture real-world factors such as mounting height and camera tilt.

So the next step was to bring back our strongest capability: 3D visualization.
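To make the limitation of 2D concrete, here is a minimal sketch of a 3D visibility test. It assumes a pinhole camera with a rectangular viewing frustum, described by a mounting position, a yaw, a downward tilt (pitch), and horizontal and vertical fields of view. The conventions (z up, yaw measured from +x, negative pitch tilting down) and the function name are hypothetical, and occlusion is ignored.

```python
import math


def _dot(a, b):
    return sum(ai * bi for ai, bi in zip(a, b))


def in_frustum(cam_pos, yaw_deg, pitch_deg, hfov_deg, vfov_deg, max_range, point):
    """Return True if `point` lies inside a pinhole camera's viewing frustum.

    Assumed conventions: z is up, yaw is measured in the floor plane from +x,
    and negative pitch tilts the camera down. Walls and occluders are ignored.
    """
    yaw, pitch = math.radians(yaw_deg), math.radians(pitch_deg)
    # Orthonormal camera basis: forward (viewing direction), right, and up.
    fwd = (math.cos(pitch) * math.cos(yaw), math.cos(pitch) * math.sin(yaw), math.sin(pitch))
    right = (math.sin(yaw), -math.cos(yaw), 0.0)
    up = (-math.sin(pitch) * math.cos(yaw), -math.sin(pitch) * math.sin(yaw), math.cos(pitch))

    d = tuple(p - c for p, c in zip(point, cam_pos))
    if math.hypot(*d) > max_range:
        return False
    depth = _dot(d, fwd)  # distance along the viewing direction
    if depth <= 0:
        return False      # the point is behind the camera
    x_c, y_c = _dot(d, right), _dot(d, up)
    # Inside when both sideways offsets fit within half of each field of view.
    return (abs(x_c) / depth <= math.tan(math.radians(hfov_deg) / 2)
            and abs(y_c) / depth <= math.tan(math.radians(vfov_deg) / 2))
```

With a camera mounted 3 m up and tilted 30° down, the floor directly beneath it falls outside the frustum. A flat top-down wedge anchored at the camera would typically count that spot as covered, which is exactly the kind of blind spot 2D planning misses.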

Integrating 3D and Multi-View

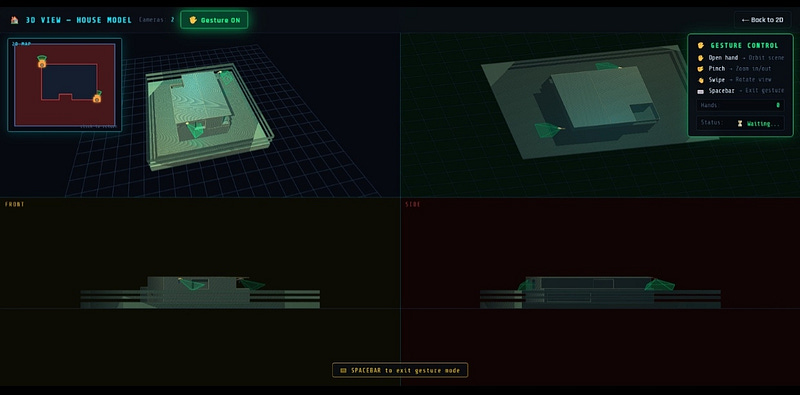

Using our existing rendering pipeline, we introduced a 3D house model and adapted our earlier multi-viewport system.

Now the same scene could be viewed from:

a perspective view for realism

a top view for layout clarity

front and side views for checking alignment and mounting height

This combination proved powerful: it let us validate camera placement from several angles at once, which felt both intuitive and practical.
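As a rough illustration of how one scene yields these four views, the sketch below projects a single 3D point into each of them. It assumes world axes with x to the right, y away from the viewer, and z up; the top, front, and side views are orthographic (each drops one coordinate), while the perspective view is simplified to a pinhole camera at a hypothetical `eye` position looking along +y. A real viewport would also apply a full view matrix and screen scaling.

```python
from typing import Dict, Optional, Tuple

Point3 = Tuple[float, float, float]
Point2 = Tuple[float, float]


def project_views(p: Point3, eye: Point3 = (0.0, -10.0, 1.6),
                  focal: float = 1.0) -> Dict[str, Optional[Point2]]:
    """Project one world point into four views of the same scene.

    Assumed axes: x to the right, y away from the viewer, z up.
    """
    x, y, z = p
    ex, ey, ez = eye
    views: Dict[str, Optional[Point2]] = {
        "top": (x, y),    # looking down -z: height is dropped
        "front": (x, z),  # looking along +y: depth is dropped
        "side": (y, z),   # looking along -x: the x coordinate is dropped
    }
    depth = y - ey  # distance in front of the simplified perspective camera
    if depth > 0:
        # Pinhole projection: divide sideways offsets by depth.
        views["perspective"] = (focal * (x - ex) / depth, focal * (z - ez) / depth)
    else:
        views["perspective"] = None  # point is behind the perspective camera
    return views
```

Because every view is computed from the same coordinates, moving a camera in any one viewport updates all four consistently.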

Keeping Interaction Natural

One feature we deliberately carried forward was gesture control.

While it originally served as an experimental input method, it proved surprisingly useful in this context. Navigating a 3D environment using hand movements made the system feel more interactive, especially during demonstrations.
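As an illustration only, here is one way hand movement could drive an orbit-style view of the scene. It assumes a hand tracker that reports the palm position in normalized [0, 1] image coordinates each frame. `OrbitCamera` and `apply_hand_motion` are hypothetical names, and the sensitivity, dead-zone, and pitch limits are arbitrary choices, not values from our system.

```python
import math
from dataclasses import dataclass


@dataclass
class OrbitCamera:
    yaw_deg: float = 45.0    # rotation around the vertical axis
    pitch_deg: float = 30.0  # elevation above the floor plane
    radius: float = 12.0     # distance from the orbit target

    def position(self, target=(0.0, 0.0, 0.0)):
        """Camera position on a sphere around `target` (z up)."""
        yaw, pitch = math.radians(self.yaw_deg), math.radians(self.pitch_deg)
        tx, ty, tz = target
        return (tx + self.radius * math.cos(pitch) * math.cos(yaw),
                ty + self.radius * math.cos(pitch) * math.sin(yaw),
                tz + self.radius * math.sin(pitch))


def apply_hand_motion(cam, prev, curr, sensitivity=180.0, deadzone=0.01):
    """Update the orbit angles from the palm's movement between two frames."""
    dx, dy = curr[0] - prev[0], curr[1] - prev[1]
    if math.hypot(dx, dy) < deadzone:
        return cam  # ignore small tracking jitter
    # Horizontal hand motion spins the view around the target.
    cam.yaw_deg = (cam.yaw_deg + dx * sensitivity) % 360
    # Image y grows downward, so raising the hand (dy < 0) raises the view;
    # clamp to stay above the floor and short of looking straight down.
    cam.pitch_deg = max(5.0, min(85.0, cam.pitch_deg - dy * sensitivity))
    return cam
```

The dead zone keeps a resting hand from nudging the view, which matters most during live demonstrations.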

Rather than an added gimmick, it now feels like a natural part of exploring and analyzing the space.