
Everyone’s debating Claude vs. GPT vs. Gemini.

Meanwhile, Inception Labs quietly rewrote the rules of the game.

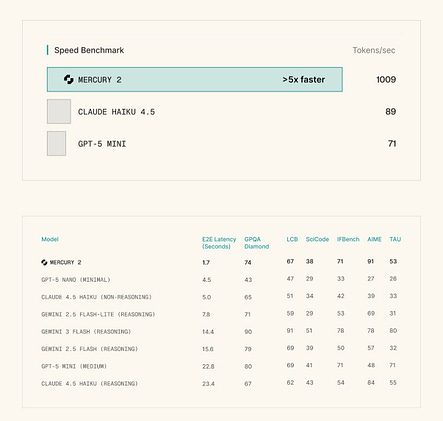

The company, founded by three professors from Stanford, Cornell, and UCLA, just dropped Mercury 2, which it bills as the world’s first reasoning diffusion language model. And the numbers are kind of wild.

1,009 tokens per second.

Compare that to Claude Haiku 4.5 with reasoning at ~89 tokens/sec. Or GPT-5 Mini at ~71 tokens/sec. That’s not an incremental improvement. That’s a different category.
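To make the gap concrete, here's a quick back-of-the-envelope sketch in Python. It uses only the throughput figures quoted above and assumes a constant decode rate while ignoring time-to-first-token and network overhead, so treat the output as rough intuition, not a benchmark. The 2,000-token response length is an arbitrary, hypothetical example.

```python
# Throughput figures as quoted above (tokens per second).
THROUGHPUT_TOK_PER_S = {
    "Mercury 2": 1009,
    "Claude Haiku 4.5 (reasoning)": 89,
    "GPT-5 Mini": 71,
}


def seconds_to_generate(num_tokens: int, tok_per_s: float) -> float:
    """Decode time assuming a constant token rate (ignores time-to-first-token)."""
    return num_tokens / tok_per_s


def speedup(baseline: str, model: str = "Mercury 2") -> float:
    """How many times faster `model` decodes than `baseline`, by raw tokens/sec."""
    return THROUGHPUT_TOK_PER_S[model] / THROUGHPUT_TOK_PER_S[baseline]


if __name__ == "__main__":
    response_tokens = 2000  # hypothetical response length
    for name, rate in THROUGHPUT_TOK_PER_S.items():
        secs = seconds_to_generate(response_tokens, rate)
        print(f"{name:30s} {secs:5.1f} s for {response_tokens:,} tokens")
    for baseline in ("Claude Haiku 4.5 (reasoning)", "GPT-5 Mini"):
        print(f"Mercury 2 vs {baseline}: {speedup(baseline):.1f}x")
```

On those numbers, a response that takes Mercury 2 about two seconds takes the other two models over twenty, which works out to roughly 11x and 14x.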

Here’s what makes this more than a speed flex:

The architecture is fundamentally different. Every major LLM you use today generates text one token at a time, left to right. Mercury 2 generates entire blocks in parallel and refines them the way an editor reworks a whole draft, not the way a typist adds one word at a time.
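Here's a toy sketch of why that matters for speed. It is *not* Mercury 2's actual algorithm, which isn't described here. It's a simplified masked-diffusion-style decoder: start with a block of `<mask>` placeholders, let a "denoiser" propose a token and a confidence for every position in one call, commit the most confident ones, and repeat. The "models" are hypothetical stand-ins that just look up a fixed target sentence, and real diffusion LMs can also revise tokens they've already committed, which this sketch skips. The point is the count of sequential model calls: one per token for autoregressive decoding versus a fixed number of refinement steps for the parallel version.

```python
import math
import random

MASK = "<mask>"
TARGET = "diffusion models refine a whole draft in parallel".split()


def toy_next_token(prefix: list[str]) -> str:
    """Hypothetical autoregressive 'model': returns the next word of TARGET."""
    return TARGET[len(prefix)]


def toy_denoise(draft: list[str], rng: random.Random) -> list[tuple[str, float]]:
    """Hypothetical denoiser: proposes (token, confidence) for EVERY position at once.
    It's an oracle over TARGET with random confidences, purely for illustration."""
    return [(TARGET[i], rng.random()) for i in range(len(draft))]


def autoregressive_decode() -> tuple[list[str], int]:
    out: list[str] = []
    calls = 0
    while len(out) < len(TARGET):
        out.append(toy_next_token(out))  # one sequential model call per token
        calls += 1
    return out, calls


def diffusion_decode(steps: int, seed: int = 0) -> tuple[list[str], int]:
    rng = random.Random(seed)
    draft = [MASK] * len(TARGET)
    per_step = math.ceil(len(TARGET) / steps)  # positions committed per call
    calls = 0
    while MASK in draft:
        proposals = toy_denoise(draft, rng)  # one call scores the whole block
        calls += 1
        masked = [i for i, tok in enumerate(draft) if tok == MASK]
        # Commit the most confident still-masked positions; the rest stay masked.
        masked.sort(key=lambda i: proposals[i][1], reverse=True)
        for i in masked[:per_step]:
            draft[i] = proposals[i][0]
    return draft, calls


if __name__ == "__main__":
    print("autoregressive calls:", autoregressive_decode()[1])
    print("diffusion-style calls (3 steps):", diffusion_decode(steps=3)[1])
```

Both decoders end up with the same eight-word sentence, but the autoregressive loop needs eight sequential calls while the parallel version needs three. Each parallel call does more work, so the real-world win depends on the hardware keeping those wide calls cheap.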

The price gap is just as striking. Mercury 2 runs at $0.25 per million input tokens and $0.75 per million output tokens. That’s about a quarter of Gemini Flash’s output price and roughly a sixth of Claude Haiku’s.
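Per-token pricing is easy to reason about with a tiny cost helper. The sketch below plugs in only Mercury 2's quoted rates; the workload of 10 million input tokens and 2 million output tokens is a hypothetical example, and the `Pricing` class is just an illustration, not any provider's API.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Pricing:
    input_per_million: float   # USD per 1M input tokens
    output_per_million: float  # USD per 1M output tokens

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        """Total USD cost for a workload, billed linearly per token."""
        return (
            input_tokens * self.input_per_million
            + output_tokens * self.output_per_million
        ) / 1_000_000


# Rates as quoted above.
MERCURY_2 = Pricing(input_per_million=0.25, output_per_million=0.75)

if __name__ == "__main__":
    # Hypothetical workload: 10M tokens of prompts, 2M tokens of responses.
    print(f"${MERCURY_2.cost(10_000_000, 2_000_000):.2f}")  # prints "$4.00"
```

Output tokens dominate the bill for generation-heavy jobs, which is why the comparison above focuses on output pricing.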

Mercury 2 isn’t at the frontier yet. On reasoning benchmarks, it’s competitive with Haiku and GPT-5 Mini, not with Claude Opus or Gemini Pro. But that’s not the point.