Bro, your recent notebooks used a library that could be compromised.

I woke up to this message from a friend. I’d previously shared some Python notebooks that used litellm, a ubiquitous library that had two versions compromised (more in the article below).

So I spent the first half hour scrubbing the code and releasing a new version, then sent out a chat message about the vulnerability.

But I realised that my notebooks were probably the least of many folks’ worries. litellm is not just used directly; it is also a dependency of many widely used libraries, such as DSPy.

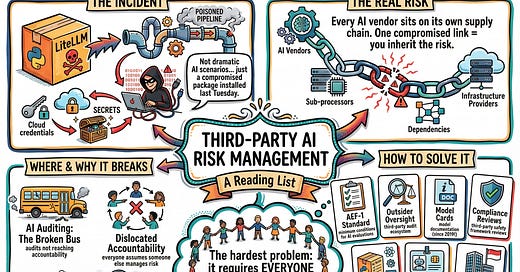
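If you want to see whether litellm is sitting in your own environment, and which installed packages pull it in, a rough check could look like the sketch below. It uses only the standard library's `importlib.metadata`. The helper names (`normalize`, `requirement_name`, `find_dependents`, `installed_requirements`) are my own for illustration, and it only reports direct dependents in the current environment. It does not tell you whether the installed version is one of the compromised ones, so compare the printed version against the advisory yourself.

```python
import re
from importlib import metadata


def normalize(name: str) -> str:
    """Normalize a distribution name (lowercase, runs of -, _, . become -)."""
    return re.sub(r"[-_.]+", "-", name).lower()


def requirement_name(requirement: str) -> str:
    """Extract the package name from a requirement string like 'litellm>=1.0'."""
    match = re.match(r"\s*([A-Za-z0-9][A-Za-z0-9._-]*)", requirement)
    return normalize(match.group(1)) if match else ""


def find_dependents(target: str, requirements: dict[str, list[str]]) -> list[str]:
    """Return the packages whose declared requirements include `target`."""
    wanted = normalize(target)
    return sorted(
        package
        for package, reqs in requirements.items()
        if any(requirement_name(r) == wanted for r in reqs)
    )


def installed_requirements() -> dict[str, list[str]]:
    """Map each installed distribution to its declared requirement strings."""
    result = {}
    for dist in metadata.distributions():
        name = dist.metadata["Name"]
        if name:  # skip distributions with broken metadata
            result[normalize(name)] = list(dist.requires or [])
    return result


if __name__ == "__main__":
    try:
        print("litellm installed:", metadata.version("litellm"))
    except metadata.PackageNotFoundError:
        print("litellm is not installed in this environment")
    print("direct dependents:", find_dependents("litellm", installed_requirements()))
```

Note that this also lists packages that declare litellm only as an optional extra, so treat the output as a starting point for review rather than proof of exposure.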

It’s the perfect example of third-party AI risk that has nothing to do with the dramatic scenarios we imagine: collusion, rogue AI, coordinated manipulation. Sometimes it's a simple file in a package you installed last Tuesday.

I’d drafted a reading list on third-party AI risk management a few weeks ago, when the MAS third-party risk management guidelines came out. But I've been sitting on it. Until now.

Have a read and let me know: what's your biggest third-party AI challenge right now?