
If you feel you are being cheated, you are not alone.

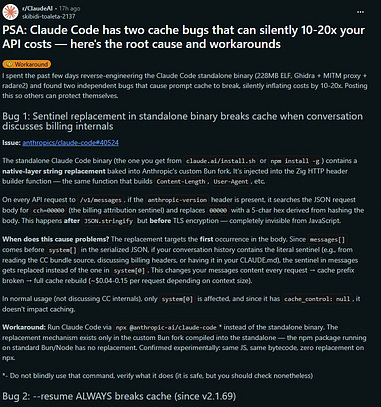

There's been talk of Anthropic reducing limits on Claude. But aside from that deliberate reduction, it seems there are two bugs taking it to the extreme, which have been confirmed by two Anthropic engineers on X.

Two lessons for me though.

First, it demonstrates the crazy utility of caching. The first bug is basically caused by the replacement of a simple key in the message, which breaks caching due to a key mismatch. The second bug is a simple shift in the position of parts of the message structure sent to the server, which causes the existing cache for the session context to be ignored and rebuilt from scratch. Breaking the cache contributes to a spike of ~10-20x in token consumption.
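To make the two failure modes concrete, here is a toy sketch of prefix-based caching in Python. This is my own illustration, not Anthropic's actual implementation: the `prefix_hashes` and `send` helpers, the message shapes, and the `"text"`/`"content"` key names are all hypothetical. The only assumption is that a cache entry is reusable when everything up to that point in the request is byte-for-byte the same as before.

```python
import hashlib
import json


def prefix_hashes(blocks):
    """Return one digest per prefix: digest i covers blocks[0..i]."""
    running = hashlib.sha256()
    digests = []
    for block in blocks:
        running.update(json.dumps(block, sort_keys=True).encode("utf-8"))
        digests.append(running.hexdigest())
    return digests


def send(cache, blocks):
    """Simulate one request; return (blocks read from cache, blocks rebuilt)."""
    digests = prefix_hashes(blocks)
    cached = 0
    for digest in digests:
        if digest not in cache:
            break  # the first mismatch invalidates everything after it
        cached += 1
    cache.update(digests)
    return cached, len(blocks) - cached


# Hypothetical session: tool definitions, context, one earlier reply.
session = [
    {"role": "system", "text": "tool definitions"},
    {"role": "user", "text": "project context"},
    {"role": "assistant", "text": "earlier reply"},
]
next_turn = {"role": "user", "text": "next question"}

cache = set()
first = send(cache, session)                 # cold start, nothing cached
second = send(cache, session + [next_turn])  # healthy follow-up turn

# Failure mode 1: a key in the first block is replaced ("text" -> "content").
renamed_head = {"role": "system", "content": "tool definitions"}
renamed = send(cache, [renamed_head] + session[1:] + [next_turn])

# Failure mode 2: same blocks, but two of them swap positions.
shifted = send(cache, [session[1], session[0], session[2], next_turn])

print(first, second, renamed, shifted)
```

In the healthy follow-up only the new turn is rebuilt. In both broken cases the mismatch happens at the very first block, so the whole session is rebuilt even though almost nothing in it changed.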

Second, it made me realise that there's some utility in always testing other models. Aside from Claude Code, I also use OpenAI's Codex and Moonshot's Kimi-Code. If Claude Code is my star worker, then Codex has been my star reviewer, while Kimi-Code is the new worker I am onboarding. So in times like these, switching to either Codex or Kimi-Code is easier, as I already know their quirks and have built some workflows around them.

#Claude #Anthropic #Bug