
Alfred is now training small language models to help build itself an associative memory.

Alfred now:

- processes every new conversation and file into the vault at regular intervals, strictly following the ontology

- can take a braindump (audio, video or text) and file it properly into the vault

- scans the vault regularly for ontology violations, duplicates, empty stubs and broken links and fixes them automatically

- actively looks for hidden relationships and links unlinked entities

- automatically enriches vault entries using web search

- regularly reruns the clustering workflow on the DGX Spark (switched to nomic-embed-text and HDBSCAN): embed the full vault text → run HDBSCAN on the vectors to reveal clusters → label clusters with Qwen 2.5 7B running locally → label relationships and relationship types (also Qwen)
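A minimal sketch of that clustering loop, to make the data flow concrete. It is not Alfred's actual code: `cluster_vault` and its three callables are hypothetical names. In a real run, `embed` would call nomic-embed-text, `cluster` could wrap an HDBSCAN implementation (for example `sklearn.cluster.HDBSCAN(...).fit_predict`), and `label` would prompt the local Qwen model. The relationship-labelling step is left out.

```python
from collections import defaultdict
from typing import Callable, Dict, List, Sequence

Vector = Sequence[float]


def cluster_vault(
    notes: Dict[str, str],
    embed: Callable[[str], Vector],
    cluster: Callable[[List[Vector]], List[int]],
    label: Callable[[List[str]], str],
) -> List[Dict[str, object]]:
    """Embed every note, cluster the vectors, then label each cluster."""
    names = sorted(notes)
    vectors = [embed(notes[name]) for name in names]
    assignments = cluster(vectors)  # one cluster id per note

    members: Dict[int, List[str]] = defaultdict(list)
    for name, cluster_id in zip(names, assignments):
        if cluster_id != -1:  # HDBSCAN uses -1 for points it treats as noise
            members[cluster_id].append(name)

    # Ask the labelling model for a name based on the text of the member notes.
    return [
        {"label": label([notes[n] for n in group]), "notes": group}
        for _, group in sorted(members.items())
    ]
```

Passing the three steps in as functions keeps the sketch independent of any particular embedding server or clustering library, so each piece can be swapped without touching the loop.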

The Palantir-based ontology gives me structure for nodes. Relationship typing is not entirely explicit, so there's room for an emergent ontology (the health scanner will fix violations anyway).
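For illustration, here is roughly what two of the health scanner's checks (empty stubs and broken links) could look like. This is a sketch under assumptions, not the real scanner: `scan_vault` is a hypothetical name, it only understands plain `[[wikilinks]]` between Markdown notes, and it ignores attachments, embeds, ontology rules and duplicate detection.

```python
import re
from pathlib import Path
from typing import Dict, List

# Captures the target of [[target]], [[target|alias]] and [[target#heading]].
WIKILINK = re.compile(r"\[\[([^\]|#]+)")


def scan_vault(vault: Path) -> Dict[str, List[str]]:
    """Report empty notes and wikilinks that point at no existing note."""
    notes = {path.stem: path for path in vault.rglob("*.md")}
    report: Dict[str, List[str]] = {"empty_stubs": [], "broken_links": []}

    for name, path in sorted(notes.items()):
        text = path.read_text(encoding="utf-8")
        if not text.strip():
            report["empty_stubs"].append(name)
        for target in WIKILINK.findall(text):
            # Links may include a folder, e.g. [[people/Bob]]; keep the note name.
            target = target.strip().split("/")[-1]
            if target and target not in notes:
                report["broken_links"].append(f"{name} -> {target}")
    return report
```

This version only reports problems. Fixing them automatically would be a separate step that acts on the report.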

This means it no longer matters what I throw at Alfred: the information will be parsed and filed in the right category, and over time everything will become more semantically interconnected without me having to lift a finger.

Now that Alfred has a proper brain, I need to be able to query it. After reading some work from Distil Labs, my hunch is that I could train a small language model (like Qwen 3 0.6B) on the ontology schema, not the content, and outperform RAG with responses in <100ms.

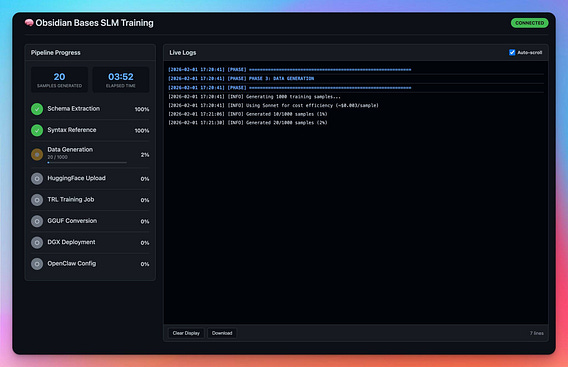

So here's what Alfred is doing right now:

1. Use Opus 4.5 and Sonnet 4.5 subagents to generate 1000 pairs of natural-language prompts and Obsidian Base syntax responses.

2. Submit a TRL job with LoRA fine-tuning on HF Jobs (a10g-large).

3. Convert the result to Q4_K_M quantization for Ollama.

4. Once done, deploy on the DGX Spark.

5. Add an alias on OpenClaw for future use.

6. Add a simple Python script that sends every prompt to the small model and gets Obsidian Base syntax responses back. The script will validate each response and rerun if it is incorrect.
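The script in the last step could be sketched like this. Several things here are assumptions rather than the real setup: the model name `alfred-base-query` is a placeholder, the server is assumed to be Ollama on its default local port, and `looks_like_base` is only a crude stand-in for proper validation of Base syntax.

```python
import json
import urllib.request
from typing import Callable, Optional


def ollama_generate(prompt: str, model: str = "alfred-base-query") -> str:
    """Ask a local Ollama server for a completion (model name is a placeholder)."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False}).encode()
    request = urllib.request.Request(
        "http://localhost:11434/api/generate",
        data=body,
        headers={"Content-Type": "application/json"},
    )
    with urllib.request.urlopen(request, timeout=5) as reply:
        return json.loads(reply.read())["response"]


def looks_like_base(text: str) -> bool:
    """Stand-in check: the reply has a top-level `filters:` or `views:` key."""
    return any(line.startswith(("filters:", "views:")) for line in text.splitlines())


def query_with_retry(
    prompt: str,
    generate: Callable[[str], str],
    is_valid: Callable[[str], bool],
    max_attempts: int = 3,
) -> Optional[str]:
    """Call the model until a reply passes validation, or give up with None."""
    for _ in range(max_attempts):
        response = generate(prompt).strip()
        if is_valid(response):
            return response
    return None
```

A call would look like `query_with_retry(user_prompt, ollama_generate, looks_like_base)`. Capping the retries matters for the latency target, since every rerun adds another model call.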

The use case for Alfred is that every time I hit submit, Alfred intercepts the prompt and first runs the Python script, which returns, in Base syntax, the Obsidian filenames of the contextually relevant parts of the vault in <100ms.

Then we inject the filenames into the prompt and send that to the Alfred agent.
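The injection step itself can be very small. The sketch below assumes the Base query has already been resolved into a plain list of note names. `inject_context` and the "Relevant vault notes" header are made up for illustration.

```python
from typing import List


def inject_context(prompt: str, filenames: List[str]) -> str:
    """Prepend the retrieved note names to the user's prompt as wikilinks."""
    if not filenames:
        return prompt  # nothing retrieved: send the prompt through unchanged
    listing = "\n".join(f"- [[{name}]]" for name in filenames)
    return f"Relevant vault notes:\n{listing}\n\n{prompt}"
```

Falling back to the untouched prompt when nothing is retrieved means a failed lookup never blocks the request.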

Rebuilding context on the fly at every prompt faster than lightning. This will give Alfred associative memory.

If retrieval is accurate, of course (in theory it should be accurate at least ~70% of the time).