
> It objects to chaining many assumptions, each of which has a certain probability of failure, or at least of taking a very long time. [...] The problem with this is that it’s hard to make the probabilities work out in a way that doesn’t leave at least a 5-10% chance on the full nightmare scenario happening in the next decade.

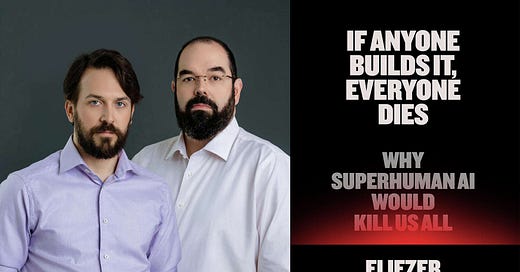

I find this an underrated problem with any "predict the future" scenario that depends on multiple contingent things happening, especially in an adversarial environment. In the case of IABIED, the argument only works if you accept that extremely fast recursive self-improvement will happen, which is a very strong assumption and hence requires a "magic algorithm to get to godhood", as the book posits. I had also done this exercise to check that intuition: strangeloopcanon.com/p/…
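The conjunction arithmetic behind this disagreement can be sketched in a few lines. This is a minimal illustration, not a model from the book or the linked post. It assumes each step's probability is already conditional on all earlier steps having happened, so the chance of the full scenario is their product. The step counts and probabilities below are hypothetical. The second function runs the calculation backwards: for a chain of a given length, how likely each step must be for the whole chain to reach a target probability such as the 5-10% mentioned in the quote.

```python
from math import prod


def chained_probability(step_probs):
    """Probability that every step in a chain of contingent events happens.

    Assumption: each entry is P(step | all previous steps occurred),
    so the joint probability is simply the product of the entries.
    """
    for p in step_probs:
        if not 0.0 <= p <= 1.0:
            raise ValueError(f"probability out of range: {p}")
    return prod(step_probs)


def required_step_probability(target, n_steps):
    """Per-step probability needed (if all steps are equally likely)
    for an n-step chain to reach the target overall probability."""
    if not 0.0 < target <= 1.0 or n_steps < 1:
        raise ValueError("target must be in (0, 1] and n_steps >= 1")
    return target ** (1.0 / n_steps)


# Hypothetical numbers for illustration only.
print(chained_probability([0.5, 0.5, 0.5, 0.5]))    # four coin-flip steps: 0.0625
print(round(required_step_probability(0.10, 10), 3))  # 0.794
```

With these made-up numbers, four coin-flip steps already push the full scenario down to about 6%, while a ten-step chain needs each step to be roughly 79% likely just to reach 10% overall. Whether that reads as "small" or "worryingly large" depends on how many steps you think the chain really has and how confident you are in each one.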