How Cache Stampedes Happen

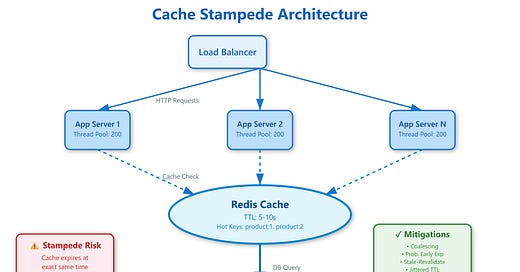

A cache stampede occurs when a popular cache key expires and multiple requests simultaneously discover it’s missing. Each request assumes it should regenerate the cached value, triggering a wave of identical expensive operations.

The mechanism is deceptively simple. Request A checks the cache and misses. Request B checks 2ms later and also misses, because A has not finished regenerating the value yet. Request C arrives at 5ms and misses too. All three now query the database, compute the same result, and write it back to the cache. Under high traffic, this multiplies into hundreds or thousands of concurrent backend hits.
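The race is easy to reproduce in a few lines. What follows is a minimal sketch rather than production code: the dictionary stands in for a shared cache, `expensive_query` is a hypothetical backend call that sleeps to mimic a slow database, and `handle_request` and `simulate` are illustrative names.

```python
import threading
import time

cache = {}                      # stand-in for a shared cache (e.g. Redis, Memcached)
backend_calls = 0               # how many times the "database" was queried
counter_lock = threading.Lock()

def expensive_query():
    """Hypothetical backend call; the sleep mimics a slow database query."""
    global backend_calls
    with counter_lock:
        backend_calls += 1
    time.sleep(0.2)
    return "rendered homepage"

def handle_request(key):
    """Naive read-through: check the cache, regenerate on a miss."""
    value = cache.get(key)
    if value is None:
        # Nothing tells this request that another one is already regenerating.
        value = expensive_query()
        cache[key] = value
    return value

def simulate(n_requests, key="homepage"):
    """Expire the key, fire n_requests concurrently, return the backend call count."""
    global backend_calls
    cache.pop(key, None)        # the popular key has just expired
    backend_calls = 0
    threads = [threading.Thread(target=handle_request, args=(key,))
               for _ in range(n_requests)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return backend_calls

if __name__ == "__main__":
    print("backend calls during stampede:", simulate(20))
    handle_request("homepage")  # the cache is warm again, so this is a hit
    print("backend calls after warm-up:", backend_calls)
```

Because every thread reads the cache before the first slow query has finished, the backend call count during the stampede should come out close to the number of requests, while the request made after warm-up adds none.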

The worst stampedes happen on your most important keys. Suppose the cache entry for a homepage serving 50,000 RPS expires. Within 20ms, 1,000 requests have missed and gone to your database, and misses keep arriving until the first refresh is written back. Even if each query takes only 100ms, those first 1,000 requests alone add up to 100 seconds of total database work for what should have been a single cache refresh.
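The arithmetic behind those figures can be checked directly. This is a back-of-the-envelope sketch; `stampede_cost` and its parameter names are made up for illustration, and it assumes traffic arrives at a perfectly even rate.

```python
def stampede_cost(rps, window_ms, query_ms):
    """Return (requests that miss, total backend work in seconds).

    rps       -- request rate on the expired key
    window_ms -- how long the key has been missing, in milliseconds
    query_ms  -- time one regeneration query takes, in milliseconds
    """
    missed = rps * window_ms // 1000      # requests arriving inside the window
    total_work_s = missed * query_ms / 1000
    return missed, total_work_s

# Figures from the homepage example: 50,000 RPS, 20ms window, 100ms query.
missed, work = stampede_cost(50_000, 20, 100)
print(missed, work)   # 1000 100.0
```

Total work is cumulative across all queries, not wall-clock time: the database is asked to do 100 seconds of work in a fraction of a second, which is what saturates it.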

The problem compounds because slow responses cause client retries. The original request times out at 5 seconds and the client retries, so you now have double the load. Request queues build up in your application servers, consuming memory and connections. The system enters a degraded state in which the backend is too overloaded to repopulate the cache, so every miss leads to more misses.
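A rough model shows how quickly retries inflate the load. This sketch rests on simplifying assumptions that are not in the scenario above: a steady request rate, every timed-out attempt being retried, and retries timing out at the same rate as first attempts. `offered_load` is a hypothetical helper name.

```python
def offered_load(base_rps, timeout_fraction, max_retries):
    """Requests per second reaching the backend once retries are included.

    base_rps         -- rate of original (first-attempt) requests
    timeout_fraction -- share of attempts that time out (0.0 to 1.0)
    max_retries      -- retries a client makes before giving up
    """
    load = 0.0
    attempts = float(base_rps)
    for _ in range(max_retries + 1):
        load += attempts                 # this round of attempts hits the backend
        attempts *= timeout_fraction     # only timed-out attempts are retried
    return load

# Every request times out and is retried once: double the load.
print(offered_load(1000, 1.0, 1))   # 2000.0
# Same outage, but clients retry three times: four times the load.
print(offered_load(1000, 1.0, 3))   # 4000.0
```

The more of the backend's capacity goes to retried attempts, the longer the original refresh takes, which is why the degraded state tends to sustain itself.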