Load Shedding and Request Prioritization: Keeping Critical Flows Alive During Outages

Introduction

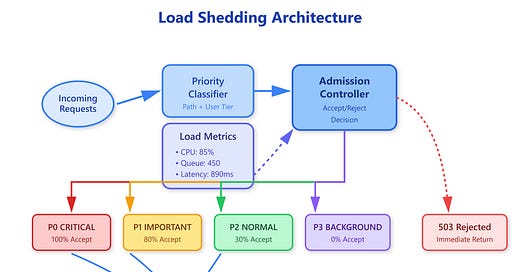

Your payment processing service is drowning. A bot attack floods your API with 50,000 requests per second, ten times your normal traffic. Meanwhile, legitimate users trying to complete checkouts are timing out. Your database connections are exhausted, CPU is pegged at 100%, and response times have degraded from 50 ms to 8 seconds. The traditional approach of accepting every request and letting everything fail slowly creates cascading failures across dependent services. Load shedding is the counterintuitive solution: deliberately reject low-priority requests so that critical operations survive.
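To make the idea concrete, here is a minimal sketch of priority-based admission control in Python. It is one possible shape for a load shedder, not a definitive implementation: the names `Priority`, `LoadShedder`, `try_admit`, and `release` are hypothetical, and the per-priority limits (50%, 80%, 100% of capacity) are illustrative assumptions rather than recommended values.

```python
import threading
from enum import IntEnum


class Priority(IntEnum):
    CRITICAL = 0  # e.g. checkout and payment calls (assumed classification)
    NORMAL = 1
    LOW = 2       # e.g. anonymous or bulk traffic (assumed classification)


class LoadShedder:
    """Admit a request only while in-flight work is below its priority's limit."""

    def __init__(self, capacity, limits=None):
        self.capacity = capacity
        # Fraction of capacity each priority may fill; illustrative values only.
        self.limits = limits or {
            Priority.CRITICAL: 1.0,
            Priority.NORMAL: 0.8,
            Priority.LOW: 0.5,
        }
        self.in_flight = 0
        self._lock = threading.Lock()

    def try_admit(self, priority):
        """Return True and count the request, or False to shed it."""
        with self._lock:
            if self.in_flight >= self.capacity * self.limits[priority]:
                return False
            self.in_flight += 1
            return True

    def release(self):
        """Call once when an admitted request finishes."""
        with self._lock:
            if self.in_flight > 0:
                self.in_flight -= 1


shedder = LoadShedder(capacity=10)
# Low-priority traffic is cut off once half the capacity is in use.
low_admitted = sum(shedder.try_admit(Priority.LOW) for _ in range(20))
# Critical traffic can still use the remaining headroom.
critical_admitted = sum(shedder.try_admit(Priority.CRITICAL) for _ in range(20))
print(low_admitted, critical_admitted)
```

In a real service, a shed request would typically get a fast rejection (for example an HTTP 503) instead of queueing, which is what keeps the rejected work from consuming the database connections and CPU that checkouts need.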

Apr 7 at 11:35 AM
