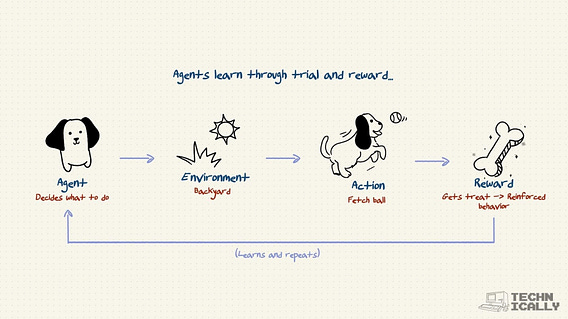

RLHF is like training a dog to fetch:

You don't explain physics. You give treats.

The dog figures out: ball comes back = treat.

The AI figures out: helpful answer = high score from the reward model.

Neither understands why it works. Both learn what works.
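
Here is the treat loop as a toy sketch, not a real RLHF pipeline. Everything in it is an assumption made for illustration: the three canned responses, the hard-coded `reward_model`, and the plain REINFORCE update (production systems typically train a neural reward model on human preference data and use something like PPO with a KL penalty). The policy never sees why an answer scores well. It only sees the score.

```python
import math
import random

# Hypothetical stand-in for a learned reward model: the "treat" dispenser.
def reward_model(response: str) -> float:
    return 1.0 if "helpful" in response else 0.0

def softmax(logits):
    m = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def train(responses, steps=2000, lr=0.1, seed=0):
    rng = random.Random(seed)
    logits = [0.0] * len(responses)  # the policy: one preference per response
    baseline = 0.0                   # running average of recent rewards
    for _ in range(steps):
        probs = softmax(logits)
        # The policy "acts": sample one response according to its preferences.
        i = rng.choices(range(len(responses)), weights=probs)[0]
        r = reward_model(responses[i])
        advantage = r - baseline
        baseline += 0.05 * (r - baseline)
        # REINFORCE: d log pi(i) / d logit_j = (1 if j == i else 0) - probs[j]
        for j in range(len(logits)):
            grad = (1.0 if j == i else 0.0) - probs[j]
            logits[j] += lr * advantage * grad
    return softmax(logits)

# Illustrative responses, invented for this sketch.
RESPONSES = ["a helpful answer", "an evasive answer", "a rude answer"]
final_probs = train(RESPONSES)

for text, p in zip(RESPONSES, final_probs):
    print(f"{p:.3f}  {text}")
```

After training, almost all of the probability sits on the response the reward model likes. Swap the scoring rule and the policy will chase the new treat just as readily.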

Feb 11 at 8:00 PM