𝗪𝗵𝗮𝘁 𝗜𝘀 𝗠𝗼𝗱𝗲𝗹 𝗖𝗼𝗻𝘁𝗲𝘅𝘁 𝗣𝗿𝗼𝘁𝗼𝗰𝗼𝗹 (𝗠𝗖𝗣)?

Every AI integration starts the same way: custom connectors, glue code, fragile scripts. Connect to Slack? Build an adapter. Query a database? Another adapter. Access files? You get the idea.

This doesn't scale.

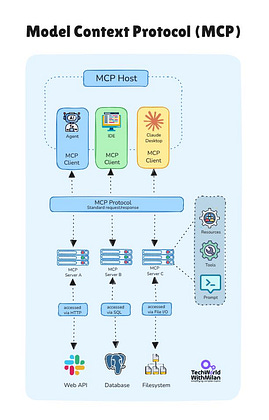

𝗠𝗖𝗣 is an open standard from Anthropic that defines how AI models connect to external systems: databases, APIs, file systems, and SaaS tools. One protocol instead of dozens of custom integrations.

Here's why it matters:

𝟭. 𝗥𝘂𝗻𝘁𝗶𝗺𝗲 𝗗𝗶𝘀𝗰𝗼𝘃𝗲𝗿𝘆

REST clients usually need new code when endpoints change. MCP servers expose their capabilities dynamically via tools/list. The client queries what's available at runtime and the model adapts. No SDK regeneration.
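To make the discovery step concrete, here is a minimal sketch of a tools/list round trip. It assumes a toy in-process server instead of a real transport. The tool names (search_issues, create_issue) and the helper functions handle and discover are hypothetical, not taken from any SDK.

```python
# Hypothetical tool catalog; names and schemas are illustrative only.
TOOLS = [
    {
        "name": "search_issues",
        "description": "Search issues by keyword.",
        "inputSchema": {
            "type": "object",
            "properties": {"query": {"type": "string"}},
            "required": ["query"],
        },
    },
]


def handle(request: dict) -> dict:
    """Toy in-process server: answers tools/list, rejects everything else."""
    if request["method"] == "tools/list":
        return {"jsonrpc": "2.0", "id": request["id"], "result": {"tools": TOOLS}}
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "error": {"code": -32601, "message": "Method not found"},
    }


def discover(send) -> dict:
    """Ask the server what it offers and index the tools by name."""
    response = send({"jsonrpc": "2.0", "id": 1, "method": "tools/list"})
    return {tool["name"]: tool for tool in response["result"]["tools"]}


print(sorted(discover(handle)))  # ['search_issues']

# The server gains a tool; the client code above does not change.
TOOLS.append(
    {
        "name": "create_issue",
        "description": "Open a new issue.",
        "inputSchema": {"type": "object", "properties": {}},
    }
)
print(sorted(discover(handle)))  # ['create_issue', 'search_issues']
```

The client never hard-codes a tool name. It asks again and picks up whatever the server lists.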

𝟮. 𝗗𝗲𝘁𝗲𝗿𝗺𝗶𝗻𝗶𝘀𝘁𝗶𝗰 𝗘𝘅𝗲𝗰𝘂𝘁𝗶𝗼𝗻

Traditional approach: the LLM generates HTTP requests. Result: hallucinated paths, wrong parameters. MCP approach: the LLM picks which tool to call and supplies the arguments, then 𝘄𝗿𝗮𝗽𝗽𝗲𝗱 𝗰𝗼𝗱𝗲 𝗲𝘅𝗲𝗰𝘂𝘁𝗲𝘀 𝗱𝗲𝘁𝗲𝗿𝗺𝗶𝗻𝗶𝘀𝘁𝗶𝗰𝗮𝗹𝗹𝘆. You can test, validate inputs, and handle errors in actual code.
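A rough sketch of that split, under some assumptions: the model contributes only a tool name and an arguments object, and ordinary code does the rest. The get_invoice tool, its fields, and the call_tool dispatcher are hypothetical. The result shape (a content list plus an isError flag) mirrors how MCP tool results are commonly described, simplified here.

```python
import json


def get_invoice(arguments: dict) -> dict:
    """Hypothetical tool body: validate input, then run fixed, testable logic."""
    invoice_id = arguments.get("invoice_id")
    # bool is a subclass of int in Python, so reject it explicitly.
    if isinstance(invoice_id, bool) or not isinstance(invoice_id, int) or invoice_id <= 0:
        raise ValueError("invoice_id must be a positive integer")
    # A real handler would call an existing API here through a fixed code path.
    return {"invoice_id": invoice_id, "status": "paid"}


HANDLERS = {"get_invoice": get_invoice}


def call_tool(name: str, arguments: dict) -> dict:
    """Dispatch a model-chosen tool call to deterministic code."""
    handler = HANDLERS.get(name)
    if handler is None:
        return {"isError": True, "content": [{"type": "text", "text": f"Unknown tool: {name}"}]}
    try:
        result = handler(arguments)
    except ValueError as exc:
        return {"isError": True, "content": [{"type": "text", "text": str(exc)}]}
    return {"isError": False, "content": [{"type": "text", "text": json.dumps(result)}]}


print(call_tool("get_invoice", {"invoice_id": 7})["isError"])    # False
print(call_tool("get_invoice", {"invoice_id": "7"})["isError"])  # True
```

A bad argument from the model becomes a clean error result instead of a malformed HTTP request.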

𝟯. 𝗕𝗶𝗱𝗶𝗿𝗲𝗰𝘁𝗶𝗼𝗻𝗮𝗹 𝗖𝗼𝗺𝗺𝘂𝗻𝗶𝗰𝗮𝘁𝗶𝗼𝗻

Servers can request LLM completions, ask users for input, and push progress notifications. This isn't bolted on; it's core to the protocol.
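For a feel of what those server-initiated messages look like on the wire, here is a sketch of three JSON-RPC payloads and a small classifier. The method names follow my reading of the MCP specification (sampling, elicitation, progress). The payload fields are abbreviated and the example values are made up, so check them against the current spec revision before relying on them.

```python
def classify(message: dict) -> str:
    """Classify a JSON-RPC 2.0 message by its shape."""
    if "method" in message:
        # Requests carry an id and expect a reply; notifications do not.
        return "request" if "id" in message else "notification"
    return "response"


# Server -> client: ask the host's model for a completion.
sampling_request = {
    "jsonrpc": "2.0",
    "id": 41,
    "method": "sampling/createMessage",
    "params": {
        "messages": [
            {"role": "user", "content": {"type": "text", "text": "Summarize the diff."}}
        ],
        "maxTokens": 200,
    },
}

# Server -> client: ask the user for structured input.
elicitation_request = {
    "jsonrpc": "2.0",
    "id": 42,
    "method": "elicitation/create",
    "params": {
        "message": "Which branch should I deploy?",
        "requestedSchema": {
            "type": "object",
            "properties": {"branch": {"type": "string"}},
            "required": ["branch"],
        },
    },
}

# Server -> client: fire-and-forget progress update (no id, no reply).
progress_notification = {
    "jsonrpc": "2.0",
    "method": "notifications/progress",
    "params": {"progressToken": "job-1", "progress": 3, "total": 10},
}

for msg in (sampling_request, elicitation_request, progress_notification):
    print(msg["method"], "->", classify(msg))
```

The first two expect a reply from the client. The third is one-way.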

𝟰. 𝗦𝗶𝗻𝗴𝗹𝗲 𝗜𝗻𝗽𝘂𝘁 𝗦𝗰𝗵𝗲𝗺𝗮

REST scatters data across paths, headers, query params, and body. Each MCP tool takes one JSON object as input, described by a single schema, and returns one result. Predictable structure every time.
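One way to picture the single-schema idea: everything a tool needs arrives in one arguments object, so one check covers it. The sketch below is a deliberately tiny validator that handles only required fields and a few primitive types. It is not a full JSON Schema implementation, and the create_ticket schema is hypothetical.

```python
# Hypothetical input schema for an imaginary create_ticket tool.
CREATE_TICKET_SCHEMA = {
    "type": "object",
    "properties": {
        "title": {"type": "string"},
        "priority": {"type": "integer"},
        "urgent": {"type": "boolean"},
    },
    "required": ["title"],
}

PRIMITIVES = {"string": str, "integer": int, "number": (int, float)}


def matches(value, type_name: str) -> bool:
    """Check a value against one primitive type name (subset of JSON Schema)."""
    if type_name == "boolean":
        return isinstance(value, bool)
    expected = PRIMITIVES.get(type_name)
    if expected is None:
        return True  # Types outside this sketch are not checked.
    # bool is a subclass of int in Python, so exclude it for numeric types.
    return isinstance(value, expected) and not isinstance(value, bool)


def validate(arguments: dict, schema: dict) -> list:
    """Return a list of human-readable problems; empty means the input is OK."""
    errors = [f"missing required field: {key}"
              for key in schema.get("required", []) if key not in arguments]
    for key, value in arguments.items():
        spec = schema.get("properties", {}).get(key)
        if spec and not matches(value, spec.get("type", "")):
            errors.append(f"{key} should be of type {spec['type']}")
    return errors


print(validate({"title": "Login fails", "priority": 2}, CREATE_TICKET_SCHEMA))  # []
print(validate({"priority": "high"}, CREATE_TICKET_SCHEMA))
```

In practice a full JSON Schema validator would replace this. The point is that there is exactly one object to validate.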

𝟱. 𝗟𝗼𝗰𝗮𝗹-𝗙𝗶𝗿𝘀𝘁 𝗗𝗲𝘀𝗶𝗴𝗻

MCP runs over stdio for local tools. No port binding, no CORS configuration. When servers run locally, they inherit the host process's permissions: direct filesystem access, terminal commands, and system operations.
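A minimal sketch of what "over stdio" means in practice, assuming newline-delimited JSON-RPC messages: read a line, handle it, write a line. The serve function is hypothetical and answers only ping. It skips the initialization handshake and parse-error handling that a real server needs.

```python
import io
import json


def serve(reader, writer) -> None:
    """Read one JSON-RPC message per line and write one reply per line."""
    for line in reader:
        line = line.strip()
        if not line:
            continue
        request = json.loads(line)
        if "id" not in request:
            continue  # Notifications get no reply.
        if request.get("method") == "ping":
            response = {"jsonrpc": "2.0", "id": request["id"], "result": {}}
        else:
            response = {
                "jsonrpc": "2.0",
                "id": request["id"],
                "error": {"code": -32601, "message": "Method not found"},
            }
        writer.write(json.dumps(response) + "\n")
        writer.flush()


# Demo with in-memory streams; a local server would pass sys.stdin and sys.stdout.
incoming = io.StringIO('{"jsonrpc": "2.0", "id": 1, "method": "ping"}\n')
outgoing = io.StringIO()
serve(incoming, outgoing)
print(outgoing.getvalue().strip())
```

No sockets anywhere: the host launches the server as a child process and talks to it through pipes.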

OpenAI, Microsoft, and Google now support MCP. Cursor, Windsurf, and Claude Desktop use it natively. Zapier exposed 𝟴,𝟬𝟬𝟬+ 𝗮𝗽𝗽𝘀 through a single MCP endpoint. Developers built servers for Blender, Figma, GitHub, Postgres, and dozens more.

𝗧𝗵𝗲 𝗰𝗮𝘁𝗰𝗵: 𝘀𝗲𝗰𝘂𝗿𝗶𝘁𝘆. MCP doesn't enforce authentication at the protocol level. No standardized permission model. Tool safety depends on your server implementation. Input validation, access control, and audit logging are on you.
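What "on you" can look like, as a rough sketch: a wrapper that checks an allowlist and records every attempt before a tool runs. The roles, tool names, ALLOWED_TOOLS policy, and guarded_call helper are all hypothetical. MCP itself does not define them.

```python
# Hypothetical policy: which role may call which tool.
ALLOWED_TOOLS = {
    "analyst": {"read_report"},
    "admin": {"read_report", "delete_report"},
}

# In-memory audit trail; a real deployment would use durable storage.
AUDIT_LOG = []

# Hypothetical tool implementations.
HANDLERS = {
    "read_report": lambda args: f"report {args['id']}",
    "delete_report": lambda args: f"deleted {args['id']}",
}


def guarded_call(role: str, tool: str, arguments: dict):
    """Log the attempt, enforce the allowlist, then run the tool."""
    allowed = tool in ALLOWED_TOOLS.get(role, set())
    AUDIT_LOG.append({"role": role, "tool": tool, "arguments": arguments, "allowed": allowed})
    if not allowed:
        raise PermissionError(f"{role!r} may not call {tool!r}")
    return HANDLERS[tool](arguments)


print(guarded_call("analyst", "read_report", {"id": 1}))  # report 1
try:
    guarded_call("analyst", "delete_report", {"id": 1})
except PermissionError as exc:
    print("blocked:", exc)
print(len(AUDIT_LOG))  # 2
```

Denied calls are logged too, which is usually what an audit needs most.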

MCP isn't replacing APIs. Most servers wrap existing REST endpoints. You keep your infrastructure while adding an AI-friendly layer on top.

For engineers building AI features, MCP solves the 𝗡×𝗠 𝗶𝗻𝘁𝗲𝗴𝗿𝗮𝘁𝗶𝗼𝗻 𝗽𝗿𝗼𝗯𝗹𝗲𝗺. Instead of connecting N tools to M models separately, implement one protocol. The ecosystem handles the rest.
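The arithmetic behind N×M, as a quick sketch: point-to-point wiring grows with the product of tools and models, while a shared protocol grows with their sum. The function name and the example counts are illustrative only.

```python
def integrations(n_tools: int, n_models: int) -> dict:
    """Count adapters needed with and without a shared protocol."""
    return {
        "point_to_point": n_tools * n_models,   # one adapter per (tool, model) pair
        "shared_protocol": n_tools + n_models,  # one protocol implementation each
    }


print(integrations(5, 4))  # {'point_to_point': 20, 'shared_protocol': 9}
```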