
𝗪𝗵𝘆 𝗡𝗲𝘁𝗳𝗹𝗶𝘅 𝗱𝗲𝗰𝗶𝗱𝗲𝗱 𝘁𝗼 𝗮𝗯𝗮𝗻𝗱𝗼𝗻 𝘁𝗵𝗲 𝗖𝗤𝗥𝗦 𝗮𝗽𝗽𝗿𝗼𝗮𝗰𝗵

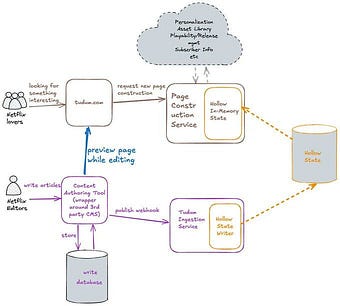

Netflix launched 𝗧𝘂𝗱𝘂𝗺 in late 2021 as its official fan website, a destination for exclusive interviews, behind-the-scenes content, and show-related material.

The team built it using CQRS to optimize read performance for serving content to fans.

The write path utilized a third-party CMS with a dedicated ingestion service that handled content updates via webhooks.

This service transformed CMS data into a read-optimized format and published it to Kafka.

But their CQRS implementation couldn't deliver the read-after-write consistency their editorial workflow required.

Tudum launched with standard CQRS separation:

Write path: third-party CMS with webhook-driven ingestion service.

Read path: Kafka → Cassandra → Page Data Service with near cache → Page Construction Service.

The ingestion pipeline transformed CMS data into read-optimized format (CDN URLs instead of asset IDs, hydrated movie metadata instead of placeholders). Kafka decoupled writes from reads, and Cassandra provided the query store.
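To make "read-optimized" concrete, here is a minimal Python sketch of that kind of transformation. Everything in it is an assumption for illustration: the field names, the `ASSET_CDN_URLS` and `MOVIE_METADATA` lookup tables, and the `to_read_optimized` function are hypothetical, not Netflix's actual schema or code.

```python
# Hypothetical lookup tables standing in for asset and title services.
ASSET_CDN_URLS = {"asset-123": "https://cdn.example.com/img/asset-123.jpg"}
MOVIE_METADATA = {"movie-42": {"title": "Example Title", "year": 2021}}


def to_read_optimized(cms_entry: dict) -> dict:
    """Resolve references once, at write time, so reads need no extra lookups."""
    return {
        "slug": cms_entry["slug"],
        "headline": cms_entry["headline"],
        # Asset ID -> ready-to-serve CDN URL.
        "hero_image_url": ASSET_CDN_URLS[cms_entry["hero_asset_id"]],
        # Placeholder movie ID -> hydrated metadata.
        "movie": MOVIE_METADATA[cms_entry["movie_id"]],
    }


cms_entry = {
    "slug": "behind-the-scenes",
    "headline": "Behind the scenes",
    "hero_asset_id": "asset-123",
    "movie_id": "movie-42",
}
page = to_read_optimized(cms_entry)
```

The read side can then serve `page` as-is, which is the point of doing the work on the write path.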

But they had 𝗮 𝗰𝗼𝗻𝘀𝗶𝘀𝘁𝗲𝗻𝗰𝘆 𝗽𝗿𝗼𝗯𝗹𝗲𝗺. Editorial previews required strong read-after-write consistency, but the architecture delivered eventual consistency with significant delays, because every edit had to pass through four stages before it became visible:

1. CMS webhook triggers the ingestion service

2. Ingestion service queries CMS APIs, validates, transforms, and produces to Kafka

3. Data Service Consumer processes Kafka message, writes to Cassandra

4. Near cache refreshes on 60-second cycle

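The near cache refreshes keys gradually across its cycle: with 60 keys and a 60-second refresh interval, for example, it updates about one key per second. An edit therefore stayed invisible until its key came up for refresh. Growing content made this worse, and preview delays stretched to over 30 seconds.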
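The staleness window is easy to reproduce with a toy model. The Python sketch below is an assumption-laden simplification, not Netflix's near cache: the `NearCache` class is hypothetical, it refreshes a key lazily on read once its interval has elapsed (rather than in the background), and time is passed in explicitly so the example is deterministic.

```python
class NearCache:
    """Toy near cache: a key is re-read from the store only after its interval elapses."""

    def __init__(self, store: dict, refresh_interval_s: float = 60.0):
        self.store = store
        self.refresh_interval_s = refresh_interval_s
        self._entries = {}  # key -> (value, fetched_at)

    def get(self, key: str, now: float):
        entry = self._entries.get(key)
        if entry is None or now - entry[1] >= self.refresh_interval_s:
            # Cache miss or expired entry: fetch the current value from the store.
            entry = (self.store.get(key), now)
            self._entries[key] = entry
        return entry[0]


store = {"page:home": "v1"}
cache = NearCache(store)

seen_at_0 = cache.get("page:home", now=0.0)    # first read populates the cache
store["page:home"] = "v2"                      # editor publishes a change at t=1s
seen_at_30 = cache.get("page:home", now=30.0)  # still the old value
seen_at_60 = cache.get("page:home", now=60.0)  # interval elapsed, new value visible
```

In this model an editor who saves just after a refresh waits almost the full interval to see the change, which is exactly the kind of delay a preview workflow can't tolerate.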

So Netflix replaced Kafka, Cassandra, and the near cache with 𝗥𝗔𝗪 𝗛𝗼𝗹𝗹𝗼𝘄, its in-memory object database.

Key characteristics:

✅ Entire dataset is distributed across the application cluster memory

✅ Compression reduces the memory footprint to 25% of the uncompressed size

✅ Eventual consistency by default, with strong consistency available as a per-request option

✅ O(1) synchronous data access, no I/O per request
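The "eventual by default, strong per request" idea can be sketched in a few lines of Python. This is a toy model under stated assumptions, not the RAW Hollow API: the `Writer` and `LocalReplica` classes, the `strong` flag, and the version-based sync are all hypothetical names chosen for illustration.

```python
class Writer:
    """Source of truth; bumps a version number on every write."""

    def __init__(self):
        self.version = 0
        self.data = {}

    def put(self, key, value):
        self.data[key] = value
        self.version += 1


class LocalReplica:
    """In-process copy of the whole dataset; reads are plain dict lookups."""

    def __init__(self, writer: Writer):
        self.writer = writer
        self.version = -1
        self.snapshot = {}
        self.sync()

    def sync(self):
        # Copy the latest state only if the replica is behind the writer.
        if self.version != self.writer.version:
            self.snapshot = dict(self.writer.data)
            self.version = self.writer.version

    def get(self, key, strong: bool = False):
        if strong:
            self.sync()  # catch up first: read-after-write for this request only
        return self.snapshot.get(key)


writer = Writer()
writer.put("page:home", "draft-1")
replica = LocalReplica(writer)
writer.put("page:home", "draft-2")  # replica has not synced yet

eventual = replica.get("page:home")             # may be stale
strong = replica.get("page:home", strong=True)  # reflects the latest write
```

The design choice worth noticing is that only the requests that need freshness (such as editorial previews) pay for the sync, while ordinary fan traffic keeps reading from local memory.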

This enabled them to 𝗮𝗰𝗵𝗶𝗲𝘃𝗲 𝘀𝗶𝗴𝗻𝗶𝗳𝗶𝗰𝗮𝗻𝘁 𝗽𝗲𝗿𝗳𝗼𝗿𝗺𝗮𝗻𝗰𝗲 𝗶𝗺𝗽𝗿𝗼𝘃𝗲𝗺𝗲𝗻𝘁𝘀, such as reducing editorial previews from minutes to seconds and page construction from 1.4 seconds to 0.4 seconds.

CQRS optimizes for scale and separation of concerns. However, when your dataset fits in memory and you require strong consistency, simpler approaches often prove more effective.

The "right" pattern depends on your specific constraints. Netflix optimized for its editorial workflow rather than for theoretical scalability limits it hadn't yet reached.

Architecture decisions should align with actual requirements, not anticipated ones.

Image: Netflix