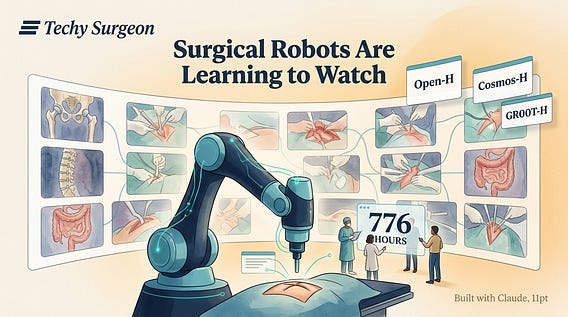

NVIDIA just open-sourced 776 hours of surgical video — and it's not for streaming.

At GTC 2026, NVIDIA launched the first domain-specific physical AI platform for healthcare robotics, anchored by three releases:

Open-H: the largest healthcare robotics dataset ever, with 776+ hours of annotated surgical video.

Cosmos-H: generates physically accurate synthetic surgical data for training robots on procedures they haven't yet observed.

GR00T-H: a vision-language-action model that lets robots understand text commands and translate them into precise physical motion.

CMR Surgical, Johnson & Johnson MedTech, Moon Surgical, and Rob Surgical are already adopting the toolkit.

The shift here is conceptual: surgical robots are moving from remote-controlled instruments to systems that learn from watching procedures, the same way residents do. The infrastructure layer for surgical AI just became public.

As an orthopedic surgeon, the question that keeps me up at night isn't whether these tools will work. It's whether the training data represents the full diversity of anatomy, pathology, and surgical approaches.

Surgeons: would you contribute your OR video to an open dataset if it advanced the field? What would you need to see first?