
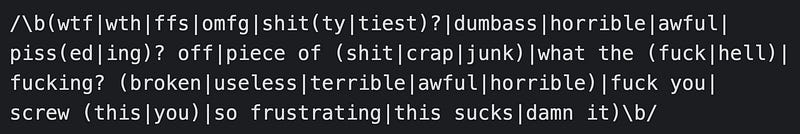

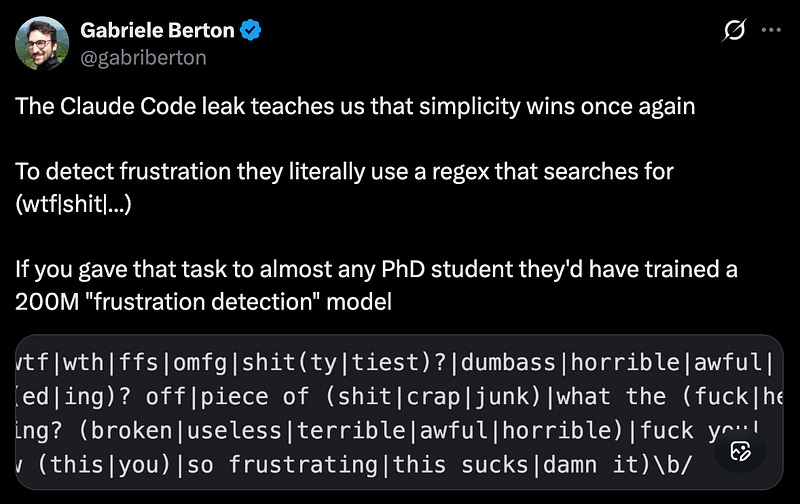

This was pretty funny to me, but it makes sense. A lot of companies use LLMs for many of their security or ethical-usage detectors. The problem is that each one you add, even a small model, adds cost and latency.

A good regex effectively adds neither. Classic error handling still has its place!
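To make that concrete, here is a rough sketch of a regex check that runs before any model-based detector. The patterns, `regex_flag`, `check`, and the `llm_detector` callable are all hypothetical names made up for illustration, not anyone's actual setup.

```python
import re
from typing import Callable

# Hypothetical patterns, for illustration only; a real list would be tuned per product.
BLOCK_PATTERNS = [
    re.compile(r"ignore (all )?previous instructions", re.IGNORECASE),
    re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),  # a number shaped like a US SSN
]


def regex_flag(text: str) -> bool:
    """Return True if any precompiled pattern matches the text."""
    return any(p.search(text) for p in BLOCK_PATTERNS)


def check(text: str, llm_detector: Callable[[str], bool]) -> bool:
    """Flag text with the cheap regex pass first; call the model only if it misses.

    `llm_detector` stands in for whatever model-based classifier is in use
    (an assumption here) and should return True when it flags the text.
    """
    if regex_flag(text):
        return True  # caught locally, so no model call is made
    return llm_detector(text)
```

Anything the regex catches never reaches the model, so the obvious cases cost no extra call. The model still handles whatever the patterns miss.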

Apr 7 at 1:28 PM
