One of the biggest puzzles I’ve run into in the data center world: why is there a widespread perception that data centers operate 24/7/365 at near-maximum demand, when their actual utilization appears significantly lower?

I spoke about this yesterday with state energy officials and regulators on a NASEO webinar, noting how this perception of constant, peak operation can drive consequential utility planning and regulatory decisions.

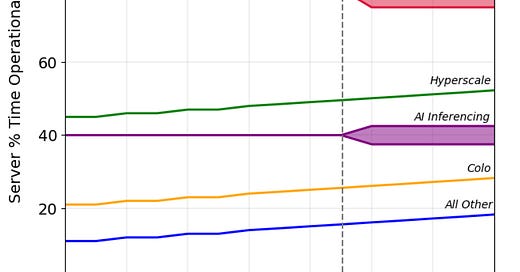

But the limited data available suggests that many data centers operate at closer to a 50% capacity utilization rate, a far cry from the 90-100% figures we often hear.

This gap stems in part from conflating key metrics, especially "load factor" vs. "capacity utilization." It also reflects real-world constraints: inconsistent workloads, overbuilt redundancy, hardware maintenance, and the fact that chips rarely run anywhere near their nameplate power.
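
To make the distinction between the two metrics concrete, here is a minimal sketch. It assumes the common definitions: load factor as average demand divided by observed peak demand, and capacity utilization as average demand divided by rated capacity. The demand readings and the 100 MW rating are made-up numbers chosen for illustration, not measurements from any facility.

```python
def average(values):
    """Arithmetic mean of a list of demand readings."""
    return sum(values) / len(values)


def load_factor(demand_mw):
    """Average demand relative to the highest demand actually observed."""
    return average(demand_mw) / max(demand_mw)


def capacity_utilization(demand_mw, rated_capacity_mw):
    """Average demand relative to the facility's rated capacity."""
    return average(demand_mw) / rated_capacity_mw


# Hypothetical demand readings (MW) for a site rated at 100 MW.
hourly_demand_mw = [48, 50, 52, 55, 53, 50, 47, 45]
rated_capacity_mw = 100  # assumed rating, for illustration only

print(f"Load factor: {load_factor(hourly_demand_mw):.0%}")  # Load factor: 91%
print(
    "Capacity utilization: "
    f"{capacity_utilization(hourly_demand_mw, rated_capacity_mw):.0%}"
)  # Capacity utilization: 50%
```

In this invented example the same site shows a load factor of about 91% and a capacity utilization of 50%. The load is steady relative to its own peak, but that peak sits well below the rated capacity, so quoting one metric as if it were the other overstates demand.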

Why does this matter? Because planning for near-constant peak demand can lead to overbuilding the power system, potentially locking in unnecessary costs and misallocating scarce utility resources.

I break this down in my latest piece, along with why greater transparency and better data are important for aligning data center growth with responsible power system planning: