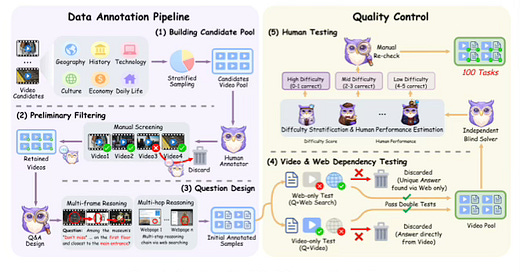

Today's paper addresses a significant limitation in current multimodal evaluations: video question answering is typically restricted to evidence within the video clip itself. In real-world scenarios, however, videos often provide visual cues that serve as starting points for broader information gathering. To bridge this gap, the paper introduces VideoDR, a benchmark designed to evaluate "Video Deep Research." This task requires models to identify specific visual anchors within a video and use them to perform iterative searches on the open web, combining internal visual evidence with external knowledge to answer complex questions.

Jan 13 at 9:16 PM
