
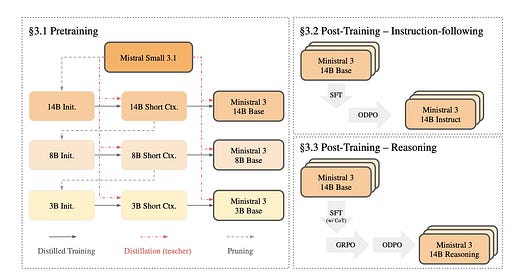

Today’s paper introduces Ministral 3, a new family of parameter-efficient dense language models designed to run effectively on hardware with limited compute and memory capacities. While many state-of-the-art models require training on massive datasets ranging from 15 to 36 trillion tokens, this paper tackles the challenge of achieving competitive performance with a significantly smaller budget of 1 to 3 trillion tokens. The resulting family consists of models in three sizes—3B, 8B, and 14B parameters—each capable of text generation, reasoning, and image understanding.

Jan 14 at 10:09 PM
