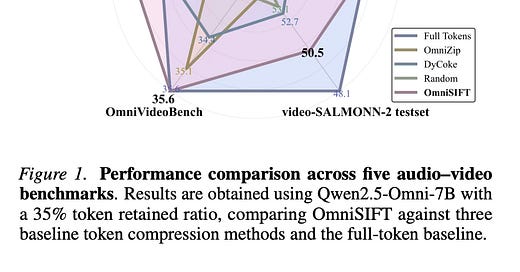

Today’s paper addresses the computational cost of Omni-modal Large Language Models (Omni-LLMs), which process both audio and video inputs. These models generate extremely long token sequences (a typical 20-second clip can produce over 20,000 tokens), leading to substantial computational overhead. The paper introduces OmniSIFT, a modality-asymmetric token compression framework that reduces token count by up to 75% while maintaining or even improving performance on audio-visual understanding tasks.
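To put those numbers in perspective, here is a rough back-of-the-envelope sketch in Python. It takes 20,000 tokens as the starting point (the summary says "over 20,000", so this is a lower bound) and applies the full 75% reduction (the stated upper bound). The quadratic cost estimate assumes standard dense self-attention, which is my assumption and not a claim from the paper; the function names are purely illustrative.

```python
def tokens_after_compression(num_tokens: int, reduction: float) -> int:
    """Tokens kept after removing a `reduction` fraction of them."""
    if not 0.0 <= reduction <= 1.0:
        raise ValueError("reduction must be in [0, 1]")
    return round(num_tokens * (1.0 - reduction))


def attention_pair_ratio(before: int, after: int) -> float:
    """How many times fewer pairwise attention scores (n^2) are needed.

    Assumes dense self-attention, where cost grows with the square
    of the sequence length. This is an illustrative assumption.
    """
    return (before * before) / (after * after)


original = 20_000  # lower bound for a typical 20-second clip
kept = tokens_after_compression(original, 0.75)

print(kept)                                  # 5000
print(attention_pair_ratio(original, kept))  # 16.0
```

Under these assumptions, keeping a quarter of the tokens cuts the number of pairwise attention scores by roughly 16x, which is why token compression matters more than the raw 75% figure might suggest.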

Feb 7 at 7:43 PM
