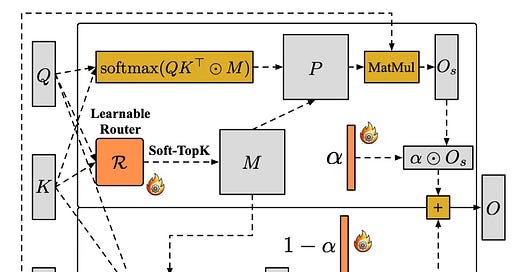

Today’s paper addresses the computational bottlenecks of video diffusion models, specifically the attention mechanism, whose cost scales quadratically with sequence length. Prior approaches such as Sparse-Linear Attention (SLA) tried to mitigate this by combining sparse and linear attention, but they relied on fixed, heuristic rules to split the computation between the two, which often led to suboptimal resource allocation and approximation errors. The paper introduces SLA2, a refined framework that replaces these heuristics with learnable components and integrates quantization to speed up video generation while preserving visual quality.

Feb 19 at 8:16 PM
