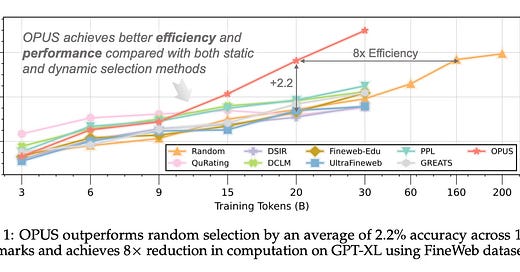

Today’s paper addresses the “Data Wall” challenge in Large Language Model (LLM) pre-training, where the supply of high-quality public text is nearing exhaustion. As the field transitions from simply scaling data volume to prioritizing data quality, selecting the most effective tokens for training becomes critical. The paper introduces OPUS, a dynamic data selection framework that aligns data selection criteria with the specific optimization dynamics of modern training algorithms, aiming to improve model performance while reducing computational costs.

Feb 21 at 7:48 PM