
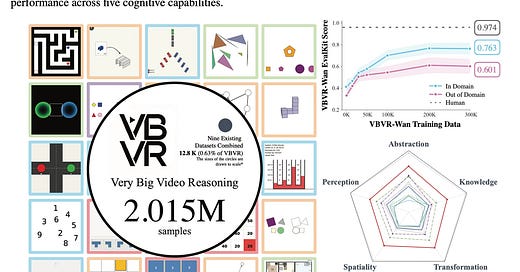

Today’s paper addresses the gap between visual realism and reasoning capability in current video generation models. Recent models can produce visually impressive content, but they often fail to understand and reason about spatiotemporal structure such as continuity, interaction, and causality. To close this gap, the paper introduces the Very Big Video Reasoning (VBVR) suite, a resource that pairs an unprecedentedly large dataset with a verifiable evaluation framework designed to systematically train and test the reasoning abilities of video models.

Feb 24 at 9:46 PM
