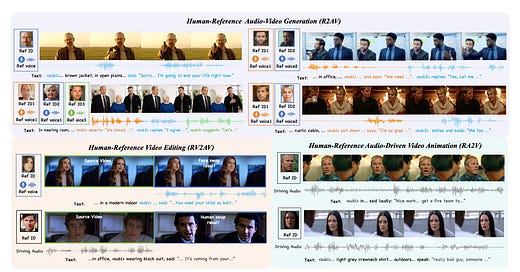

Today’s paper introduces DreamID-Omni, a unified framework designed to tackle the fragmentation in current human-centric audio-video generation. Existing approaches typically isolate tasks such as reference-based generation, video editing, and audio-driven animation, requiring a separate model for each objective. Furthermore, precise simultaneous control over multiple character identities and their specific voice timbres remains difficult. This work proposes a single framework that integrates these distinct capabilities, enabling consistent and controllable generation across diverse scenarios.

Feb 26 at 9:41 PM
