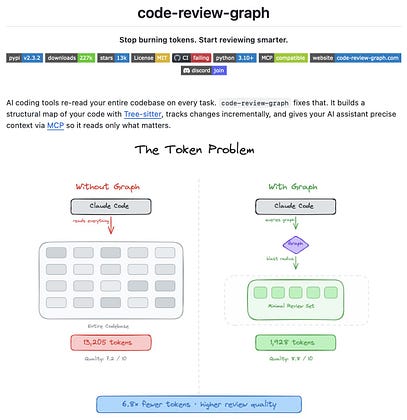

One of the biggest hidden costs of using coding agents is context waste.

You ask Claude Code to review a small change, but the agent often ends up reading files that have nothing to do with the task.

In large repositories, this quickly becomes expensive. You spend tokens on context that does not improve the review, and sometimes it even makes the model less focused.

code-review-graph tries to solve this problem by building a persistent knowledge graph of your codebase.

Instead of asking the agent to scan the whole project, the tool first maps the structure of the repository using Tree-sitter. It extracts functions, classes, imports, dependencies, and relationships between files, then stores this graph in SQLite.
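To make the idea concrete, here is a minimal sketch of that indexing step. It is not the project's code: it uses Python's built-in `ast` module as a stand-in for Tree-sitter so it runs with no dependencies, and the table layout (`nodes`, `edges`) and the `index_source` helper are assumptions made up for illustration.

```python
import ast
import sqlite3

# Hypothetical schema: one table for symbols, one for relationships.
SCHEMA = """
CREATE TABLE IF NOT EXISTS nodes (
    id   INTEGER PRIMARY KEY,
    file TEXT NOT NULL,
    kind TEXT NOT NULL,   -- 'function' or 'class'
    name TEXT NOT NULL
);
CREATE TABLE IF NOT EXISTS edges (
    file TEXT NOT NULL,
    src  TEXT NOT NULL,   -- calling function, or the importing file
    dst  TEXT NOT NULL,   -- called name, or the imported module
    kind TEXT NOT NULL    -- 'calls' or 'imports'
);
"""


def index_source(conn, path, source):
    """Parse one Python file and store its symbols and relationships."""
    tree = ast.parse(source)
    for node in ast.walk(tree):
        if isinstance(node, (ast.FunctionDef, ast.AsyncFunctionDef)):
            conn.execute(
                "INSERT INTO nodes (file, kind, name) VALUES (?, 'function', ?)",
                (path, node.name),
            )
            # Record direct calls by simple name; attribute calls such as
            # obj.method() are skipped to keep the sketch short.
            for inner in ast.walk(node):
                if isinstance(inner, ast.Call) and isinstance(inner.func, ast.Name):
                    conn.execute(
                        "INSERT INTO edges (file, src, dst, kind) "
                        "VALUES (?, ?, ?, 'calls')",
                        (path, node.name, inner.func.id),
                    )
        elif isinstance(node, ast.ClassDef):
            conn.execute(
                "INSERT INTO nodes (file, kind, name) VALUES (?, 'class', ?)",
                (path, node.name),
            )
        elif isinstance(node, ast.Import):
            for alias in node.names:
                conn.execute(
                    "INSERT INTO edges (file, src, dst, kind) "
                    "VALUES (?, ?, ?, 'imports')",
                    (path, path, alias.name),
                )
    conn.commit()


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
index_source(
    conn,
    "billing.py",
    "import tax\n\n"
    "def total(items):\n    return apply_tax(sum(items))\n\n"
    "def apply_tax(x):\n    return tax.rate() * x\n",
)
calls = conn.execute(
    "SELECT src, dst FROM edges WHERE kind = 'calls' ORDER BY src, dst"
).fetchall()
print(calls)
```

A real Tree-sitter pipeline would do the same walk over a language-agnostic syntax tree, which is what lets one indexer cover many languages.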

When you make a change, it does not reprocess the entire codebase. A hook runs on file save or git commit and updates only the parts that changed.
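One common way to get this kind of incremental behavior is to store a content hash per file and re-index only the files whose hash changed. The sketch below assumes that approach; the post does not say how the project detects changes, and the `file_hashes` table and `changed_files` helper are hypothetical names.

```python
import hashlib
import sqlite3


def changed_files(conn, files):
    """Return paths whose content differs from the last recorded hash.

    `files` maps path -> current file content. New hashes are recorded,
    so a second call with identical content returns an empty list.
    """
    conn.execute(
        "CREATE TABLE IF NOT EXISTS file_hashes ("
        "path TEXT PRIMARY KEY, sha256 TEXT NOT NULL)"
    )
    stale = []
    for path, content in files.items():
        digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
        row = conn.execute(
            "SELECT sha256 FROM file_hashes WHERE path = ?", (path,)
        ).fetchone()
        if row is None or row[0] != digest:
            stale.append(path)
            conn.execute(
                "INSERT OR REPLACE INTO file_hashes (path, sha256) VALUES (?, ?)",
                (path, digest),
            )
    conn.commit()
    return stale


conn = sqlite3.connect(":memory:")
first = changed_files(conn, {"a.py": "x = 1", "b.py": "y = 2"})
second = changed_files(conn, {"a.py": "x = 1", "b.py": "y = 3"})
print(first, second)
```

On the first run every file is new, so both are returned; on the second run only `b.py` needs re-indexing.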

Then, during review, it traces the “blast radius” of your change. It finds the callers, dependents, related files, and tests that may be affected. Claude can then read only the files that matter instead of pulling unnecessary context into the review.
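Tracing a blast radius is essentially a bounded graph traversal over reverse dependencies. Here is a minimal sketch, assuming the graph is already available as a mapping from each symbol to the symbols that depend on it. The `blast_radius` function, the `max_depth` cutoff, and the symbol names are illustrative, not the project's API.

```python
from collections import deque


def blast_radius(reverse_deps, changed, max_depth=2):
    """Breadth-first walk from the changed symbols to their dependents.

    reverse_deps maps a symbol to the symbols that call or import it.
    Returns every dependent reachable within max_depth hops.
    """
    seen = set(changed)
    impacted = set()
    queue = deque((name, 0) for name in changed)
    while queue:
        name, depth = queue.popleft()
        if depth == max_depth:
            continue  # do not expand beyond the depth limit
        for dependent in reverse_deps.get(name, ()):
            if dependent not in seen:
                seen.add(dependent)
                impacted.add(dependent)
                queue.append((dependent, depth + 1))
    return impacted


# Hypothetical graph: who depends on what.
reverse_deps = {
    "tax.rate": ["billing.apply_tax"],
    "billing.apply_tax": ["billing.total", "tests.test_apply_tax"],
    "billing.total": ["api.checkout"],
}

near = blast_radius(reverse_deps, ["tax.rate"], max_depth=2)
far = blast_radius(reverse_deps, ["tax.rate"], max_depth=3)
print(sorted(near))
print(sorted(far))
```

The depth limit is the knob that trades recall for token cost: a shallow walk keeps the review context small, a deeper one catches more distant callers.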

The idea is simple but very practical:

Give the coding agent better context selection before asking it to reason.

According to the project, this led to 6.8x fewer tokens in code reviews across six open-source repositories, and up to 49x fewer tokens for daily coding tasks.

It currently supports 23 languages, including Python, TypeScript, Rust, Go, Java, Vue, and Solidity, plus Jupyter notebooks. It works with Claude Code, Cursor, Windsurf, and any MCP-compatible agent.

This is the kind of infrastructure I expect to become more common around coding agents: not just better models, but better systems that decide what the model should read in the first place.

100% open source.

GitHub repo: github.com/tirth8205/co…