The case for a little AI regulation

Not every safety rule represents "regulatory capture" — and even challengers are asking the government to intervene

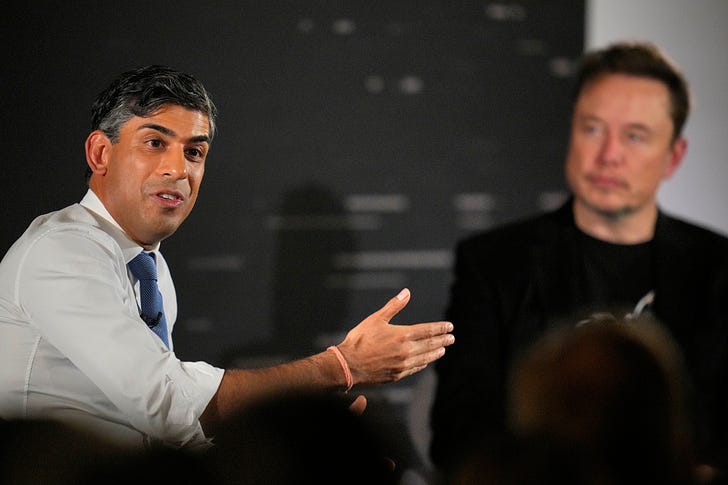

Today, as a summit about the future of artificial intelligence plays out in the United Kingdom, let’s talk about the intense debate over whether it is safer to build open-source AI systems or closed ones — and consider the argument that government attempts to regulate them will benefit only the biggest players in the space.

The AI Safety Summit in England’s Bletchley Park marks the second major government action related to the subject this week, following President Biden’s executive order on Monday. The UK’s minister of technology, Michelle Donelan, released a policy paper signed by 28 countries affirming the potential of AI to do good while calling for heightened scrutiny on next-generation large language models. Among the signatories was the United States, which also announced plans Thursday to establish a new AI safety institute under the Department of Commerce.

Despite fears that the event would devolve into far-out debates over the potential for AI to create existential risks to humanity, Bloomberg reports that attendees in closed-door sessions mostly coalesced around the idea of addressing nearer-term harms.

Here’s Thomas Seal:

Max Tegmark, a professor at the Massachusetts Institute of Technology who previously called to pause the development of powerful AI systems, said “this debate is starting to melt away.”

“Those who are concerned about existential risks, loss of control, things like that, realize that to do something about it, they have to support those who are warning about immediate harms,” he said, “to get them as allies to start putting safety standards in place.”

A focus on the potential for practical harm characterizes the approach taken by Biden’s executive order, which directs agencies to explore individual risks around weapon development, synthetic media, and algorithmic discrimination, among other harms.

And while UK Prime Minister Rishi Sunak has played up the potential for existential risk in his own remarks, so far he has taken a light-touch, business-friendly approach to regulation, reports the Washington Post. Like the United States, the UK is launching an AI safety institute of its own. “The Institute will carefully test new types of frontier AI before and after they are released to address the potentially harmful capabilities of AI models,” the British Embassy told me in an email, “including exploring all the risks, from social harms like bias and misinformation, to the most unlikely but extreme risk, such as humanity losing control of AI completely.”

Near the summit’s end, most of the major US AI companies — including OpenAI, Google DeepMind, Anthropic, Amazon, Microsoft and Meta — signed a non-binding agreement to let governments test their models for safety risks before releasing them publicly.

Writing about the Biden executive order on Monday, I argued that the industry was so far being regulated gently, and on its own terms.

I soon learned, however, that many people in the industry — along with some of their peers in academia — do not agree.

II.

The criticism of this week’s regulations goes something like this: AI can be used for good or for ill, but at its core it is a neutral, general-purpose technology.

To maximize the good it can do, regulators should work to get frontier technology into more hands. And to address harms, regulators should focus on strengthening defenses in places where AI could enable attacks, whether they be legal, physical, or digital.

To use an entirely too glib analogy, imagine that the hammer has just been invented. Critics worry that this will lead to a rash of people smashing each other with hammers, and demand that everyone who wants to buy a hammer first obtain a license from the government. The hammer industry and their allies in universities argue that we are better off letting anyone buy a hammer, while criminalizing assault and using public funds to pay for a police force and prosecutors to monitor hammer abuse. It turns out that distributing hammers more widely leads people to build things more quickly, and that most people do not smash each other with hammers, and the system basically holds.

A core objection to the current state of AI regulation is that it sets an arbitrary limit on the development of next-generation LLMs: in this case, models that are trained with 10 to the 26th power floating point operations, or flops. Critics suggest that had similar arbitrary limits been issued earlier in the history of technology, the world would be impoverished.

Here’s Steven Sinofsky, a longtime Microsoft executive and board partner at Andreessen Horowitz, on the executive order:

Imagine where we would be if at the dawn of the microprocessor someone was deeply worried about what would happen if “electronic calculators” were capable of doing math faster than even the best humans. If that would eliminate the jobs of all math people. If the enemy were capable of doing math faster than we could. Or if the unequal distribution of super-fast math skills had an impact on the climate or job market. And the result of that was a new regulation that set limits for how fast a microprocessor could be clocked or how large a number it could compute?

What if at the dawn of the internet the concern over having computers connected to each other and becoming an all-knowing communications network resulted in regulations that set limits on packet size and speed, or the number of computers that could be connected to the network or to each other?

Of course, the Biden administration does not ban LLMs that are trained with exponentially more power than GPT-4 and other current frontier models. It requires disclosure of their training to the government, along with information about safety testing. Surely the industry can stand to at least disclose the existence of these models, and test them a bit before their release?

OK, comes the response, but who’s to say that compute is the right prism through which to consider the development of new models? For one, future advances might make it possible to train more powerful models using much less power. And in the meantime, compute can be gamed. The authors at AI Snake Oil, who come from academia, worry about “a two-tier system for foundation models: frontier models whose size is unconstrained by regulation and sub-frontier models that try to stay just under the compute threshold to avoid reporting.”

Overall, they write, the system creates burdens for non-corporate AI developers and pushes the industry away from openness. There are some legitimate trade-offs here.

Where I part ways with open-source advocates is their increasingly loud argument that this system massively benefits incumbents over challengers. It’s “a clear case of regulatory capture,” Sinofsky argues.

And it isn’t only industry players like Sinofsky making this case.

“History shows us that quickly rushing towards the wrong kind of regulation can lead to concentrations of power in ways that hurt competition and innovation,” reads a letter published by Mozilla and signed by dozens of academics, AI executives, and people who straddle that divide. “Open models can inform an open debate and improve policy making.”

The never-quite-stated-aloud, but heavily insinuated view embedded in this criticism is that this week’s regulations represent a diabolical scheme on the part of the top AI companies. They warned governments of phony or overstated risks; they told the government exactly how to regulate them; they pushed for those regulations to be implemented as quickly as possible, and now that most countries have signed on, they can ensure that almost no one can compete with them.

And people say that reporters are cynical.

III.

The idea that the leaders of top AI developers don’t believe what they’re telling the government, however delicious a conspiracy theory, doesn’t square with the history of AI to this point.

OpenAI was founded as a nonprofit in 2015 by a team that worried that, if developed incorrectly, AI could do great harm. Its later evolution into a for-profit company admittedly muddies those waters, and deserves scrutiny. But the basic idea that AI could be dangerous was a foundational principle of OpenAI from the start.

Meanwhile Anthropic, another leading AI developer that gets slagged as an “incumbent” here despite being founded in 2021, was started by a group of former OpenAI executives who thought OpenAI didn’t take safety seriously enough. It has so far attempted to differentiate itself primarily through safety initiatives, starting with an effort to embed in its LLM a do-no-harm “constitution” that the AI’s responses must always follow.

And for a self-interested conspiracy, AI safety sure has managed to attract a lot of prominent scientists with no obvious financial incentives to participate. Geoffrey Hinton, a pioneer in the development of deep learning systems, quit Google so he could speak out about the risks posed by its AI systems and others. Chinese AI scientists are sounding similar alarms.

Meanwhile, the nonprofit Open Philanthropy has been funding AI safety research for the better part of a decade after identifying it as a catastrophic risk. Nick Bostrom’s Superintelligence: Paths, Dangers, Strategies, a foundational text of AI existential risk, came out in 2014.

And perhaps most notably of all, Elon Musk — a reliable hero to the regulate-nothing crowd — is one of the most prominent advocates for the idea that AI poses an existential risk to humanity. It’s what led him to co-found OpenAI and serve as one of its early funders, and it led him more recently to found his own AI company.

If AI safety is primarily the product of motivated reasoning from incumbents, how do we account for a challenger like X.ai, and the fact that Musk earlier this year joined those calling for a moratorium on the development of new models?

IV.

But perhaps you’re willing to stipulate that regulatory capture isn’t the point of top AI developers ringing alarm bells. It still sure seems like a nice byproduct.

It’s a truism in Silicon Valley that regulation favors incumbents. The more that countries issue rules for tech giants to follow around transparency, content moderation, and data privacy, the less that challengers are able to compete.

But you know what truly favors incumbents in Silicon Valley?

Not regulating them.

Governments complained endlessly about social networks after the 2016 US presidential election. But the United States passed not a single new regulation governing them.

And what was the result? Did a thousand new social networks bloom?

Not at all. We saw the rise of a single new global-scale social network, in TikTok. Various states and perhaps eventually the federal government are now seeking to ban the app from existence.

Snap can’t turn a profit to save its life. The first of the Twitter clones is already dead, and more are sure to follow.

And about 3.14 billion people use one or more of Meta’s apps every day — and its latest social product, Threads, is poised to supplant Twitter in text-based social networks.

It’s not just social networks. Ask Spotify if allowing Apple to run its own streaming music service without paying platform fees has made the market for digital music more competitive.

Ask publishers if allowing Google to own every aspect of the digital advertising marketplace has made digital media more competitive.

Ask sellers if allowing Amazon to manipulate prices has made e-commerce more competitive.

This is the great competitive dividend to the free market of not regulating the incumbent: that giants are bigger than ever; their negative externalities are paid for by taxpayers and citizens; and even still Marc Andreessen complains that we are slouching toward communism.

And so you’ll forgive me if I fail to panic when reading that leading tech companies, including some of those same giants, have voluntarily agreed to share safety testing information with the federal government. Only by the frail standards of American regulators can such a gentle request for information be considered draconian. And if and when Biden’s compute standard for model regulation stops working, there is nothing to stop him or a future president from updating it with a new executive order.

Of course, the government’s role here deserves scrutiny of its own. It’s fair to worry that governments will seek to regulate the outputs of these models along political lines, as they arguably have already. (Whether they were asked to or not, top developers have set various rules against using LLMs to create political messaging or images of politicians.)

But on balance, the exponential leaps in models’ abilities from generation to generation suggest that it is regulation — and not self-imposed industry caution — that is warranted here. Given the stakes, it is arguably the least that the government can do.

And given the history of American tech regulation, it might even be the most that the government does.

Elsewhere in AI: The Biden administration today issued draft rules “that would require federal agencies to evaluate and constantly monitor algorithms used in health care, law enforcement, and housing for potential discrimination or other harmful effects on human rights.” (Khari Johnson / Wired)

On the podcast this week: I tell Kevin about my trip to the White House and share clips of my interviews with Biden administration officials. Then, Harvard Law School professor Rebecca Tushnet joins to explain the legal setback artists faced this week after a ruling in their copyright case against AI image generators. And finally, an invigorating round of HatGPT.

Governing

Google antitrust trial: Ben Gomes, former head of search, said that his team was getting too involved with ads in 2019, according to internal emails. (Davey Alba and Leah Nylen / Bloomberg)

Google’s most profitable search queries for the week of September 22nd in 2018 included three iPhone-related queries and five insurance-related ones. (David Pierce / The Verge)

Mozilla’s CEO Mitchell Baker said that betting on Yahoo for the Firefox browser degraded users’ search experience. Who could have guessed? (Leah Nylen / Bloomberg)

Elizabeth Reid, a vice president of search at Google, denied that the company’s AI chatbot Bard was rushed out after Microsoft announced it was integrating generative AI into Bing. (Leah Nylen / Bloomberg)

An Apple internal presentation in 2013 called Android a “massive tracking device,” arguing that Apple’s privacy practices were better than Google’s. In fairness, most Android phones are relatively small tracking devices. (Adamya Sharma / Android Authority)

Many graphic videos and images online are being falsely billed as material related to the Israel-Hamas conflict, but are actually from other tragedies. (Angelo Fichera / The New York Times)

TikTok is front and center in the conflict, sparking a debate over the app’s role in shaping public opinion among young people. (Drew Harwell and Taylor Lorenz / Washington Post)

People are facing pressure on social media to post about the conflict, but many face backlash and negative comments, and some fear for their jobs. (Emma Goldberg and Sapna Maheshwari / The New York Times)

The FTC is accusing Amazon of accepting ads labeled as “defects” to boost profits and deleting internal communications using the “disappearing message” feature on Signal. (Leah Nylen and Matt Day / Bloomberg)

The agency also alleged that Amazon used a secret algorithm, named “Project Nessie”, that pushed up prices by more than $1 billion. (Diane Bartz, Arriana McLymore and David Shepardson / Reuters)

IAC submitted comments to the US Copyright Office warning that unless the government protects copyrighted material from being used by generative AI, original content will “wither and die.” (Sara Fischer / Axios)

The US Supreme Court will rule this term on whether a public official can block individuals on social media. There appeared to be no consensus on the question during recent oral arguments. (Ann E. Marimow / Washington Post)

Despite having bipartisan support, Congress has repeatedly failed to pass legislation designed to keep children safe online. One problem is that a lot of it violates the First Amendment! (Tim Wu / The Atlantic)

At a New Jersey high school, some girls discovered that AI-generated nudes of them were being circulated by boys in group chats, leading to investigations by the school and local police. (Julie Jargon / The Wall Street Journal)

New research shows that bomb threats, death threats and harassment commonly follow targeted posts from the popular reactionary Libs of TikTok X account. An awful and necessary story about a true social media menace. (Will Carless / USA Today)

Google and Match Group reached a settlement that will allow Match to implement payment systems other than Google’s. (Sarah Perez / TechCrunch)

Apple may have to scale back its fees on certain app subscriptions in the App Store, after the Dutch Authority for Consumers & Markets said they violate EU rules. (Samuel Stolton / Bloomberg)

The European Data Protection Board issued a binding decision banning Meta from processing personal data for behavioral advertising. (Joseph Duball / IAPP)

After an Instagram Reel of Indian prime minister Narendra Modi singing via AI, posted as a joke, went viral, some are saying AI cloning could help break down language barriers and win him votes. (Nilesh Christopher / Rest of World)

Industry

TikTok executives are hoping that the live-streaming boom will generate more revenue for the app, leaning on tipping revenue during streams and a cut of sales from shopping. (Erin Woo / The Information)

YouTube is cracking down on ad blockers and is disabling videos for users who use them, saying they violate the platform’s terms of service. (Emma Roth / The Verge)

YouTube Premium’s price is now increasing internationally in Argentina, Australia, Austria, Chile, Germany, Poland, and Turkey. (Abner Li / 9to5Google)

Google’s mobile-first indexing initiative is now complete after seven years, and the company is continuing to reduce legacy desktop Googlebot crawling. (Barry Schwartz / Search Engine Land)

Who is responsible for ruining the internet? SEO experts who manipulate search results, together with Google’s changing algorithm, have left users in a confusing and arguably deteriorating search landscape. (Amanda Chicago Lewis / The Verge)

LinkedIn says it now has more than 1 billion users, and debuted an AI chatbot for Premium users, powered by OpenAI’s GPT-4. (Hayden Field / CNBC)

Sheryl Sandberg and husband Tom Bernthal set up Sandberg Bernthal Venture Partners, a new vehicle to invest their personal capital. (Sylvia Varnham O'Regan and Natasha Mascarenhas / The Information)

Some companies looking to cut costs by using open-source alternatives to OpenAI are realizing that the alternatives could actually be more expensive. (Stephanie Palazzolo / The Information)

Those good posts

For more good posts every day, follow Casey’s Instagram stories.

Talk to us

Send us tips, comments, questions, and your favorite examples of regulation making markets more competitive: casey@platformer.news and zoe@platformer.news.