Excellent article, but it is missing one key component. AI agents and robots will soon be widely available for purchase, and lending will spring up in lockstep. Many of us already have multiple agents working for us, providing orders of magnitude more leverage than anyone planned for.

Financing will soon allow one to purchase a robot or a team of agents and send it to work for you. Not just knowledge work: robots are already doing an increasingly large number of jobs once thought to be the sole province of humans. For the first time, one can own and control the means of production personally, not through share ownership.

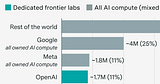

OpenAI, Anthropic, Google, Boston Dynamics, et al. are building the workforce of the future.

Many people would rather not work. Others would like to work less. Some want to get more done.

Welcome to the world of AI leverage and the new slave class.

There will be two groups: owners and owned.